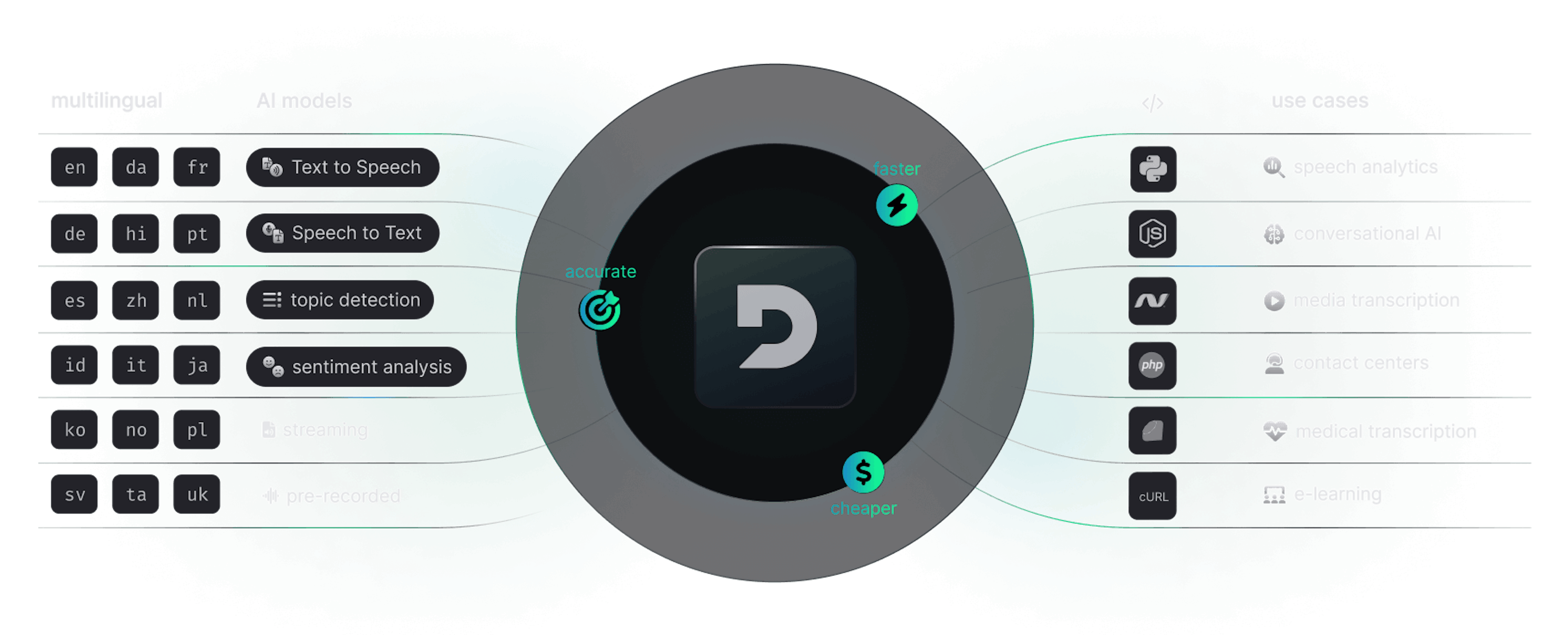

Build voice AI into your apps

Deepgram's voice AI platform provides APIs for speech-to-text, text-to-speech, and language understanding. From medical transcription to autonomous agents, Deepgram is the go-to choice for developers of voice AI experiences.

Try Deepgram API

Play around with human-like voice AI or transcribe sample audio files. Explore how our audio understanding models work.
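As a rough illustration of what a transcription call involves, here is a minimal Python sketch. It builds a `POST` request for the `/v1/listen` endpoint and reads the fields that appear in the sample response on this page (`results.channels[].alternatives[]`, with `transcript` and per-word `confidence`). Several details are assumptions not stated on this page: the `Authorization: Token ...` header, the JSON body of the form `{"url": ...}` for hosted audio, and the `model` and `paragraphs` query parameters. The helper names and the 0.8 threshold are hypothetical, chosen only for the example. The request is built but never sent.

```python
import json
import urllib.parse
import urllib.request

LISTEN_URL = "https://api.deepgram.com/v1/listen"


def build_listen_request(api_key, audio_url, **options):
    """Build (but do not send) a POST request to transcribe a hosted audio file.

    Assumption: the API accepts a JSON body of the form {"url": ...} and
    token-based authorization. `options` become query parameters.
    """
    query = urllib.parse.urlencode(options)
    url = f"{LISTEN_URL}?{query}" if query else LISTEN_URL
    body = json.dumps({"url": audio_url}).encode("utf-8")
    headers = {
        "Authorization": f"Token {api_key}",
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def first_alternative(response):
    """Return the first alternative of the first channel in a response dict."""
    return response["results"]["channels"][0]["alternatives"][0]


def low_confidence_words(response, threshold=0.8):
    """List punctuated words whose confidence is below `threshold`."""
    words = first_alternative(response).get("words", [])
    return [w["punctuated_word"] for w in words if w["confidence"] < threshold]


# A trimmed response using the same field names as the sample on this page.
sample = {
    "results": {
        "channels": [
            {
                "alternatives": [
                    {
                        "transcript": "Hi. I see.",
                        "confidence": 1,
                        "words": [
                            {"word": "hi", "confidence": 0.9995117, "punctuated_word": "Hi."},
                            {"word": "i", "confidence": 0.59521484, "punctuated_word": "I"},
                            {"word": "see", "confidence": 1, "punctuated_word": "see."},
                        ],
                    }
                ]
            }
        ]
    }
}

print(first_alternative(sample)["transcript"])
print(low_confidence_words(sample))
```

To send the request, you could pass the object returned by `build_listen_request` to `urllib.request.urlopen` and decode the body with `json.load`. Filtering on word-level confidence is one simple way to flag spans of a transcript that may need human review.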

Request URL

POST https://api.deepgram.com/v1/listen

Response Body

{

"metadata": {

"transaction_key": "deprecated",

"request_id": "34fced0b-21db-4b20-8808-7c09d8777826",

"sha256": "088e88dd76213db9b770768fe7ddc6bacde93217bb6b8129e10a8cda7c45a8d3",

"created": "2024-03-06T16:28:25.548Z",

"duration": 129.576,

"channels": 1,

"models": [

"30089e05-99d1-4376-b32e-c263170674af"

],

"model_info": {

"30089e05-99d1-4376-b32e-c263170674af": {

"name": "2-general-nova",

"version": "2024-01-09.29447",

"arch": "nova-2"

}

}

},

"results": {

"channels": [

{

"alternatives": [

{

"transcript": "Hi. Thank you so much for calling Nike. This is Allison. How can I help you today? Hey. I was supposed to receive a shoe order last Tuesday, and it's now Wednesday, a week later. So, like, what's going on? Oh, okay. I see. Could I have your order number, please? Yeah. It's 905933 679. Okay. Thank you so much for that. Okay. I apologize. It looks like your order there's been some inclement weather in, like, the Midwest, especially in the north, and your package got stuck at a facility in Wyoming because of that. But it looks like it's cleared since, and the package should be moved to New Jersey, and should be at your home address in 4 days, so it it should be arriving on Saturday. So it'll be arriving almost 2 weeks late instead of a week and a half late. Yeah. I I'm I'm really sorry about that. I can see here that, you know, you you're a long time customer with us, and, you know, you've placed a lot of orders. So if it's okay with you, on your next order that you placed through our website, because we messed up this time, I'd like to offer you a 40% discount on your next purchase with us. Yeah. I mean, that sounds good to me. Okay. That's good. Okay. Great. Okay. Your, yeah, your shoes should be there on Saturday, this coming Saturday. Is there anything else I can help you with at this time? No. Thank you. Okay. Great. And I added the discount to your account, so it should populate shortly. Thanks. Thank you. Bye.",

"confidence": 1,

"words": [

{

"word": "hi",

"start": 1.8399999,

"end": 2.24,

"confidence": 0.9995117,

"punctuated_word": "Hi."

},

{

"word": "thank",

"start": 2.56,

"end": 2.72,

"confidence": 0.9941406,

"punctuated_word": "Thank"

},

{

"word": "you",

"start": 2.72,

"end": 2.96,

"confidence": 1,

"punctuated_word": "you"

},

{

"word": "so",

"start": 2.96,

"end": 3.04,

"confidence": 1,

"punctuated_word": "so"

},

{

"word": "much",

"start": 3.04,

"end": 3.1999998,

"confidence": 1,

"punctuated_word": "much"

},

{

"word": "for",

"start": 3.1999998,

"end": 3.4399998,

"confidence": 1,

"punctuated_word": "for"

},

{

"word": "calling",

"start": 3.4399998,

"end": 3.76,

"confidence": 1,

"punctuated_word": "calling"

},

{

"word": "nike",

"start": 3.76,

"end": 4.24,

"confidence": 0.97631836,

"punctuated_word": "Nike."

},

{

"word": "this",

"start": 4.24,

"end": 4.48,

"confidence": 1,

"punctuated_word": "This"

},

{

"word": "is",

"start": 4.48,

"end": 4.64,

"confidence": 1,

"punctuated_word": "is"

},

{

"word": "allison",

"start": 4.64,

"end": 5.12,

"confidence": 0.84399414,

"punctuated_word": "Allison."

},

{

"word": "how",

"start": 5.12,

"end": 5.2799997,

"confidence": 1,

"punctuated_word": "How"

},

{

"word": "can",

"start": 5.2799997,

"end": 5.3599997,

"confidence": 1,

"punctuated_word": "can"

},

{

"word": "i",

"start": 5.3599997,

"end": 5.52,

"confidence": 1,

"punctuated_word": "I"

},

{

"word": "help",

"start": 5.52,

"end": 5.68,

"confidence": 1,

"punctuated_word": "help"

},

{

"word": "you",

"start": 5.68,

"end": 5.8399997,

"confidence": 1,

"punctuated_word": "you"

},

{

"word": "today",

"start": 5.8399997,

"end": 6.3399997,

"confidence": 1,

"punctuated_word": "today?"

},

{

"word": "hey",

"start": 7.2549996,

"end": 7.7549996,

"confidence": 0.9909668,

"punctuated_word": "Hey."

},

{

"word": "i",

"start": 8.934999,

"end": 9.434999,

"confidence": 1,

"punctuated_word": "I"

},

{

"word": "was",

"start": 9.655,

"end": 9.8949995,

"confidence": 1,

"punctuated_word": "was"

},

{

"word": "supposed",

"start": 9.8949995,

"end": 10.295,

"confidence": 0.9980469,

"punctuated_word": "supposed"

},

{

"word": "to",

"start": 10.295,

"end": 10.455,

"confidence": 1,

"punctuated_word": "to"

},

{

"word": "receive",

"start": 10.455,

"end": 10.955,

"confidence": 1,

"punctuated_word": "receive"

},

{

"word": "a",

"start": 12.775,

"end": 13.094999,

"confidence": 1,

"punctuated_word": "a"

},

{

"word": "shoe",

"start": 13.094999,

"end": 13.594999,

"confidence": 0.99902344,

"punctuated_word": "shoe"

},

{

"word": "order",

"start": 13.735,

"end": 14.235,

"confidence": 1,

"punctuated_word": "order"

},

{

"word": "last",

"start": 14.535,

"end": 14.855,

"confidence": 0.99902344,

"punctuated_word": "last"

},

{

"word": "tuesday",

"start": 14.855,

"end": 15.355,

"confidence": 0.98950195,

"punctuated_word": "Tuesday,"

},

{

"word": "and",

"start": 15.494999,

"end": 15.735001,

"confidence": 1,

"punctuated_word": "and"

},

{

"word": "it's",

"start": 15.735001,

"end": 16.16,

"confidence": 1,

"punctuated_word": "it's"

},

{

"word": "now",

"start": 16.4,

"end": 16.64,

"confidence": 1,

"punctuated_word": "now"

},

{

"word": "wednesday",

"start": 16.64,

"end": 17.14,

"confidence": 0.86035156,

"punctuated_word": "Wednesday,"

},

{

"word": "a",

"start": 17.76,

"end": 17.92,

"confidence": 0.9980469,

"punctuated_word": "a"

},

{

"word": "week",

"start": 17.92,

"end": 18.24,

"confidence": 1,

"punctuated_word": "week"

},

{

"word": "later",

"start": 18.24,

"end": 18.74,

"confidence": 0.98999023,

"punctuated_word": "later."

},

{

"word": "so",

"start": 19.36,

"end": 19.68,

"confidence": 0.9916992,

"punctuated_word": "So,"

},

{

"word": "like",

"start": 19.68,

"end": 20.18,

"confidence": 0.9995117,

"punctuated_word": "like,"

},

{

"word": "what's",

"start": 21.04,

"end": 21.36,

"confidence": 1,

"punctuated_word": "what's"

},

{

"word": "going",

"start": 21.36,

"end": 21.68,

"confidence": 1,

"punctuated_word": "going"

},

{

"word": "on",

"start": 21.68,

"end": 22.16,

"confidence": 0.9995117,

"punctuated_word": "on?"

},

{

"word": "oh",

"start": 22.16,

"end": 22.48,

"confidence": 0.9445801,

"punctuated_word": "Oh,"

},

{

"word": "okay",

"start": 22.48,

"end": 22.98,

"confidence": 0.99902344,

"punctuated_word": "okay."

},

{

"word": "i",

"start": 23.875,

"end": 24.035,

"confidence": 0.59521484,

"punctuated_word": "I"

},

{

"word": "see",

"start": 24.035,

"end": 24.435,

"confidence": 1,

"punctuated_word": "see."

},

{

"word": "could",

"start": 24.435,

"end": 24.835001,

"confidence": 0.99902344,

"punctuated_word": "Could"

},

{

"word": "i",

"start": 24.835001,

"end": 25.075,

"confidence": 1,

"punctuated_word": "I"

},

{

"word": "have",

"start": 25.075,

"end": 25.395,

"confidence": 1,

"punctuated_word": "have"

},

{

"word": "your",

"start": 25.395,

"end": 25.795,

"confidence": 1,

"punctuated_word": "your"

},

{

"word": "order",

"start": 25.795,

"end": 26.115,

"confidence": 1,

"punctuated_word": "order"

},

{

"word": "number",

"start": 26.115,

"end": 26.435,

"confidence": 0.9995117,

"punctuated_word": "number,"

},

{

"word": "please",

"start": 26.435,

"end": 26.935,

"confidence": 1,

"punctuated_word": "please?"

},

{

"word": "yeah",

"start": 27.555,

"end": 27.875,

"confidence": 1,

"punctuated_word": "Yeah."

},

{

"word": "it's",

"start": 27.875,

"end": 28.275,

"confidence": 1,

"punctuated_word": "It's"

},

{

"word": "905933",

"start": 28.275,

"end": 28.775,

"confidence": 0.8523763,

"punctuated_word": "905933"

},

{

"word": "679",

"start": 32.62,

"end": 33.12,

"confidence": 0.9873047,

"punctuated_word": "679."

},

{

"word": "okay",

"start": 34.46,

"end": 34.78,

"confidence": 0.99853516,

"punctuated_word": "Okay."

},

{

"word": "thank",

"start": 34.78,

"end": 34.94,

"confidence": 1,

"punctuated_word": "Thank"

},

{

"word": "you",

"start": 34.94,

"end": 35.1,

"confidence": 1,

"punctuated_word": "you"

},

{

"word": "so",

"start": 35.1,

"end": 35.26,

"confidence": 1,

"punctuated_word": "so"

},

{

"word": "much",

"start": 35.26,

"end": 35.42,

"confidence": 1,

"punctuated_word": "much"

},

{

"word": "for",

"start": 35.42,

"end": 35.579998,

"confidence": 1,

"punctuated_word": "for"

},

{

"word": "that",

"start": 35.579998,

"end": 36.079998,

"confidence": 1,

"punctuated_word": "that."

},

{

"word": "okay",

"start": 39.395,

"end": 39.895,

"confidence": 0.98657227,

"punctuated_word": "Okay."

},

{

"word": "i",

"start": 41.155,

"end": 41.395,

"confidence": 1,

"punctuated_word": "I"

},

{

"word": "apologize",

"start": 41.395,

"end": 41.895,

"confidence": 1,

"punctuated_word": "apologize."

},

{

"word": "it",

"start": 42.434998,

"end": 42.675,

"confidence": 1,

"punctuated_word": "It"

},

{

"word": "looks",

"start": 42.675,

"end": 43.074997,

"confidence": 1,

"punctuated_word": "looks"

},

{

"word": "like",

"start": 43.074997,

"end": 43.315,

"confidence": 1,

"punctuated_word": "like"

},

{

"word": "your",

"start": 43.315,

"end": 43.555,

"confidence": 1,

"punctuated_word": "your"

},

{

"word": "order",

"start": 43.555,

"end": 43.715,

"confidence": 1,

"punctuated_word": "order"

},

{

"word": "there's",

"start": 45.235,

"end": 45.635,

"confidence": 0.93237305,

"punctuated_word": "there's"

},

{

"word": "been",

"start": 45.635,

"end": 45.875,

"confidence": 0.99902344,

"punctuated_word": "been"

},

{

"word": "some",

"start": 45.875,

"end": 46.274998,

"confidence": 0.9980469,

"punctuated_word": "some"

},

{

"word": "inclement",

"start": 46.274998,

"end": 46.774998,

"confidence": 1,

"punctuated_word": "inclement"

},

{

"word": "weather",

"start": 46.995,

"end": 47.495,

"confidence": 1,

"punctuated_word": "weather"

},

{

"word": "in",

"start": 47.635,

"end": 48.035,

"confidence": 0.99609375,

"punctuated_word": "in,"

},

{

"word": "like",

"start": 48.035,

"end": 48.274998,

"confidence": 0.9995117,

"punctuated_word": "like,"

},

{

"word": "the",

"start": 48.274998,

"end": 48.434998,

"confidence": 1,

"punctuated_word": "the"

},

{

"word": "midwest",

"start": 48.434998,

"end": 48.934998,

"confidence": 0.9873047,

"punctuated_word": "Midwest,"

},

{

"word": "especially",

"start": 49.38,

"end": 49.62,

"confidence": 1,

"punctuated_word": "especially"

},

{

"word": "in",

"start": 49.62,

"end": 49.7,

"confidence": 0.99609375,

"punctuated_word": "in"

},

{

"word": "the",

"start": 49.7,

"end": 49.86,

"confidence": 1,

"punctuated_word": "the"

},

{

"word": "north",

"start": 49.86,

"end": 50.36,

"confidence": 0.6791992,

"punctuated_word": "north,"

},

{

"word": "and",

"start": 52.02,

"end": 52.34,

"confidence": 0.99902344,

"punctuated_word": "and"

},

{

"word": "your",

"start": 52.34,

"end": 52.579998,

"confidence": 1,

"punctuated_word": "your"

},

{

"word": "package",

"start": 52.579998,

"end": 53.079998,

"confidence": 1,

"punctuated_word": "package"

},

{

"word": "got",

"start": 53.14,

"end": 53.379997,

"confidence": 1,

"punctuated_word": "got"

},

{

"word": "stuck",

"start": 53.379997,

"end": 53.879997,

"confidence": 1,

"punctuated_word": "stuck"

},

{

"word": "at",

"start": 54.18,

"end": 54.42,

"confidence": 1,

"punctuated_word": "at"

},

{

"word": "a",

"start": 54.42,

"end": 54.66,

"confidence": 1,

"punctuated_word": "a"

},

{

"word": "facility",

"start": 54.66,

"end": 55.14,

"confidence": 1,

"punctuated_word": "facility"

},

{

"word": "in",

"start": 55.14,

"end": 55.3,

"confidence": 1,

"punctuated_word": "in"

},

{

"word": "wyoming",

"start": 55.3,

"end": 55.8,

"confidence": 1,

"punctuated_word": "Wyoming"

},

{

"word": "because",

"start": 56.02,

"end": 56.26,

"confidence": 0.9980469,

"punctuated_word": "because"

},

{

"word": "of",

"start": 56.26,

"end": 56.34,

"confidence": 1,

"punctuated_word": "of"

},

{

"word": "that",

"start": 56.34,

"end": 56.84,

"confidence": 0.9387207,

"punctuated_word": "that."

},

{

"word": "but",

"start": 57.735,

"end": 57.975,

"confidence": 0.6323242,

"punctuated_word": "But"

},

{

"word": "it",

"start": 57.975,

"end": 58.055,

"confidence": 0.99902344,

"punctuated_word": "it"

},

{

"word": "looks",

"start": 58.055,

"end": 58.295,

"confidence": 1,

"punctuated_word": "looks"

},

{

"word": "like",

"start": 58.295,

"end": 58.454998,

"confidence": 0.99902344,

"punctuated_word": "like"

},

{

"word": "it's",

"start": 58.454998,

"end": 58.855,

"confidence": 0.99902344,

"punctuated_word": "it's"

},

{

"word": "cleared",

"start": 58.855,

"end": 59.355,

"confidence": 1,

"punctuated_word": "cleared"

},

{

"word": "since",

"start": 59.735,

"end": 60.235,

"confidence": 0.907959,

"punctuated_word": "since,"

},

{

"word": "and",

"start": 60.614998,

"end": 61.114998,

"confidence": 0.99902344,

"punctuated_word": "and"

},

{

"word": "the",

"start": 61.815,

"end": 62.055,

"confidence": 1,

"punctuated_word": "the"

},

{

"word": "package",

"start": 62.055,

"end": 62.535,

"confidence": 0.99902344,

"punctuated_word": "package"

},

{

"word": "should",

"start": 62.535,

"end": 62.695,

"confidence": 0.99902344,

"punctuated_word": "should"

},

{

"word": "be",

"start": 62.695,

"end": 62.855,

"confidence": 1,

"punctuated_word": "be"

},

{

"word": "moved",

"start": 62.855,

"end": 63.355,

"confidence": 1,

"punctuated_word": "moved"

},

{

"word": "to",

"start": 63.574997,

"end": 63.895,

"confidence": 1,

"punctuated_word": "to"

},

{

"word": "new",

"start": 63.895,

"end": 64.055,

"confidence": 1,

"punctuated_word": "New"

},

{

"word": "jersey",

"start": 64.055,

"end": 64.555,

"confidence": 0.90771484,

"punctuated_word": "Jersey,"

},

{

"word": "and",

"start": 65.095,

"end": 65.335,

"confidence": 0.94628906,

"punctuated_word": "and"

},

{

"word": "should",

"start": 65.335,

"end": 65.575,

"confidence": 0.9941406,

"punctuated_word": "should"

},

{

"word": "be",

"start": 65.575,

"end": 66.075,

"confidence": 0.99902344,

"punctuated_word": "be"

},

{

"word": "at",

"start": 66.560005,

"end": 66.72,

"confidence": 1,

"punctuated_word": "at"

},

{

"word": "your",

"start": 66.72,

"end": 66.8,

"confidence": 1,

"punctuated_word": "your"

},

{

"word": "home",

"start": 66.8,

"end": 67.12,

"confidence": 1,

"punctuated_word": "home"

},

{

"word": "address",

"start": 67.12,

"end": 67.62,

"confidence": 1,

"punctuated_word": "address"

},

{

"word": "in",

"start": 67.68,

"end": 68.08,

"confidence": 1,

"punctuated_word": "in"

},

{

"word": "4",

"start": 68.08,

"end": 68.32,

"confidence": 0.984375,

"punctuated_word": "4"

},

{

"word": "days",

"start": 68.32,

"end": 68.82,

"confidence": 0.7602539,

"punctuated_word": "days,"

},

{

"word": "so",

"start": 68.880005,

"end": 69.28,

"confidence": 0.99902344,

"punctuated_word": "so"

},

{

"word": "it",

"start": 69.28,

"end": 69.44,

"confidence": 0.99902344,

"punctuated_word": "it"

},

{

"word": "it",

"start": 69.44,

"end": 69.6,

"confidence": 0.921875,

"punctuated_word": "it"

},

{

"word": "should",

"start": 69.6,

"end": 70.08,

"confidence": 0.99902344,

"punctuated_word": "should"

},

{

"word": "be",

"start": 70.08,

"end": 70.32,

"confidence": 1,

"punctuated_word": "be"

},

{

"word": "arriving",

"start": 70.32,

"end": 70.8,

"confidence": 1,

"punctuated_word": "arriving"

},

{

"word": "on",

"start": 70.8,

"end": 71.200005,

"confidence": 1,

"punctuated_word": "on"

},

{

"word": "saturday",

"start": 71.200005,

"end": 71.700005,

"confidence": 1,

"punctuated_word": "Saturday."

},

{

"word": "so",

"start": 72.880005,

"end": 73.04,

"confidence": 0.9970703,

"punctuated_word": "So"

},

{

"word": "it'll",

"start": 73.04,

"end": 73.36,

"confidence": 0.9921875,

"punctuated_word": "it'll"

},

{

"word": "be",

"start": 73.36,

"end": 73.68,

"confidence": 0.99902344,

"punctuated_word": "be"

},

{

"word": "arriving",

"start": 73.68,

"end": 74.18,

"confidence": 1,

"punctuated_word": "arriving"

},

{

"word": "almost",

"start": 74.715,

"end": 74.955,

"confidence": 1,

"punctuated_word": "almost"

},

{

"word": "2",

"start": 74.955,

"end": 75.275,

"confidence": 0.8671875,

"punctuated_word": "2"

},

{

"word": "weeks",

"start": 75.275,

"end": 75.515,

"confidence": 1,

"punctuated_word": "weeks"

},

{

"word": "late",

"start": 75.515,

"end": 75.915,

"confidence": 0.9970703,

"punctuated_word": "late"

},

{

"word": "instead",

"start": 75.915,

"end": 76.235,

"confidence": 0.9951172,

"punctuated_word": "instead"

},

{

"word": "of",

"start": 76.235,

"end": 76.475,

"confidence": 0.9980469,

"punctuated_word": "of"

},

{

"word": "a",

"start": 76.475,

"end": 76.715,

"confidence": 0.9873047,

"punctuated_word": "a"

},

{

"word": "week",

"start": 76.715,

"end": 76.955,

"confidence": 1,

"punctuated_word": "week"

},

{

"word": "and",

"start": 76.955,

"end": 77.034996,

"confidence": 0.984375,

"punctuated_word": "and"

},

{

"word": "a",

"start": 77.034996,

"end": 77.115,

"confidence": 0.98291016,

"punctuated_word": "a"

},

{

"word": "half",

"start": 77.115,

"end": 77.435,

"confidence": 0.9941406,

"punctuated_word": "half"

},

{

"word": "late",

"start": 77.435,

"end": 77.935,

"confidence": 0.68603516,

"punctuated_word": "late."

},

{

"word": "yeah",

"start": 77.994995,

"end": 78.395,

"confidence": 0.9995117,

"punctuated_word": "Yeah."

},

{

"word": "i",

"start": 78.395,

"end": 78.634995,

"confidence": 0.99902344,

"punctuated_word": "I"

},

{

"word": "i'm",

"start": 78.715,

"end": 78.955,

"confidence": 1,

"punctuated_word": "I'm"

},

{

"word": "i'm",

"start": 78.955,

"end": 79.195,

"confidence": 0.9995117,

"punctuated_word": "I'm"

},

{

"word": "really",

"start": 79.195,

"end": 79.515,

"confidence": 1,

"punctuated_word": "really"

},

{

"word": "sorry",

"start": 79.515,

"end": 79.835,

"confidence": 1,

"punctuated_word": "sorry"

},

{

"word": "about",

"start": 79.835,

"end": 80.075,

"confidence": 1,

"punctuated_word": "about"

},

{

"word": "that",

"start": 80.075,

"end": 80.555,

"confidence": 0.9995117,

"punctuated_word": "that."

},

{

"word": "i",

"start": 81.115,

"end": 81.275,

"confidence": 1,

"punctuated_word": "I"

},

{

"word": "can",

"start": 81.275,

"end": 81.515,

"confidence": 0.9970703,

"punctuated_word": "can"

},

{

"word": "see",

"start": 81.515,

"end": 81.755,

"confidence": 1,

"punctuated_word": "see"

},

{

"word": "here",

"start": 81.755,

"end": 81.994995,

"confidence": 1,

"punctuated_word": "here"

},

{

"word": "that",

"start": 81.994995,

"end": 82.494995,

"confidence": 0.99560547,

"punctuated_word": "that,"

},

{

"word": "you",

"start": 82.71,

"end": 82.79,

"confidence": 1,

"punctuated_word": "you"

},

{

"word": "know",

"start": 82.79,

"end": 83.03,

"confidence": 1,

"punctuated_word": "know,"

},

{

"word": "you",

"start": 83.03,

"end": 83.11,

"confidence": 1,

"punctuated_word": "you"

},

{

"word": "you're",

"start": 83.35,

"end": 83.59,

"confidence": 0.9995117,

"punctuated_word": "you're"

},

{

"word": "a",

"start": 83.59,

"end": 83.75,

"confidence": 0.99902344,

"punctuated_word": "a"

},

{

"word": "long",

"start": 83.75,

"end": 83.909996,

"confidence": 0.82128906,

"punctuated_word": "long"

},

{

"word": "time",

"start": 83.909996,

"end": 84.229996,

"confidence": 0.9980469,

"punctuated_word": "time"

},

{

"word": "customer",

"start": 84.229996,

"end": 84.71,

"confidence": 0.99902344,

"punctuated_word": "customer"

},

{

"word": "with",

"start": 84.71,

"end": 84.95,

"confidence": 1,

"punctuated_word": "with"

},

{

"word": "us",

"start": 84.95,

"end": 85.45,

"confidence": 0.795166,

"punctuated_word": "us,"

},

{

"word": "and",

"start": 85.829994,

"end": 86.07,

"confidence": 0.98657227,

"punctuated_word": "and,"

},

{

"word": "you",

"start": 86.07,

"end": 86.149994,

"confidence": 0.9970703,

"punctuated_word": "you"

},

{

"word": "know",

"start": 86.149994,

"end": 86.39,

"confidence": 1,

"punctuated_word": "know,"

},

{

"word": "you've",

"start": 86.39,

"end": 86.63,

"confidence": 0.9995117,

"punctuated_word": "you've"

},

{

"word": "placed",

"start": 86.63,

"end": 86.95,

"confidence": 1,

"punctuated_word": "placed"

},

{

"word": "a",

"start": 86.95,

"end": 87.03,

"confidence": 0.99902344,

"punctuated_word": "a"

},

{

"word": "lot",

"start": 87.03,

"end": 87.189995,

"confidence": 1,

"punctuated_word": "lot"

},

{

"word": "of",

"start": 87.189995,

"end": 87.35,

"confidence": 1,

"punctuated_word": "of"

},

{

"word": "orders",

"start": 87.35,

"end": 87.85,

"confidence": 0.9746094,

"punctuated_word": "orders."

},

{

"word": "so",

"start": 89.43,

"end": 89.75,

"confidence": 0.9526367,

"punctuated_word": "So"

},

{

"word": "if",

"start": 89.75,

"end": 89.99,

"confidence": 0.9941406,

"punctuated_word": "if"

},

{

"word": "it's",

"start": 89.99,

"end": 90.149994,

"confidence": 1,

"punctuated_word": "it's"

},

{

"word": "okay",

"start": 90.149994,

"end": 90.39,

"confidence": 1,

"punctuated_word": "okay"

},

{

"word": "with",

"start": 90.39,

"end": 90.549995,

"confidence": 1,

"punctuated_word": "with"

},

{

"word": "you",

"start": 90.549995,

"end": 91.025,

"confidence": 0.9885254,

"punctuated_word": "you,"

},

{

"word": "on",

"start": 91.185005,

"end": 91.425,

"confidence": 0.9980469,

"punctuated_word": "on"

},

{

"word": "your",

"start": 91.425,

"end": 91.505005,

"confidence": 1,

"punctuated_word": "your"

},

{

"word": "next",

"start": 91.505005,

"end": 91.825005,

"confidence": 1,

"punctuated_word": "next"

},

{

"word": "order",

"start": 91.825005,

"end": 92.065,

"confidence": 1,

"punctuated_word": "order"

},

{

"word": "that",

"start": 92.065,

"end": 92.225,

"confidence": 1,

"punctuated_word": "that"

},

{

"word": "you",

"start": 92.225,

"end": 92.465004,

"confidence": 0.99902344,

"punctuated_word": "you"

},

{

"word": "placed",

"start": 92.465004,

"end": 92.625,

"confidence": 0.5258789,

"punctuated_word": "placed"

},

{

"word": "through",

"start": 92.625,

"end": 92.865,

"confidence": 0.9267578,

"punctuated_word": "through"

},

{

"word": "our",

"start": 92.865,

"end": 92.945,

"confidence": 0.9760742,

"punctuated_word": "our"

},

{

"word": "website",

"start": 92.945,

"end": 93.445,

"confidence": 0.9880371,

"punctuated_word": "website,"

},

{

"word": "because",

"start": 93.905,

"end": 94.405,

"confidence": 1,

"punctuated_word": "because"

},

{

"word": "we",

"start": 94.865,

"end": 95.365,

"confidence": 1,

"punctuated_word": "we"

},

{

"word": "messed",

"start": 95.665,

"end": 95.985,

"confidence": 1,

"punctuated_word": "messed"

},

{

"word": "up",

"start": 95.985,

"end": 96.145004,

"confidence": 0.99902344,

"punctuated_word": "up"

},

{

"word": "this",

"start": 96.145004,

"end": 96.385,

"confidence": 1,

"punctuated_word": "this"

},

{

"word": "time",

"start": 96.385,

"end": 96.885,

"confidence": 0.9506836,

"punctuated_word": "time,"

},

{

"word": "i'd",

"start": 97.185,

"end": 97.425,

"confidence": 0.99902344,

"punctuated_word": "I'd"

},

{

"word": "like",

"start": 97.425,

"end": 97.585,

"confidence": 1,

"punctuated_word": "like"

},

{

"word": "to",

"start": 97.585,

"end": 97.985,

"confidence": 0.99902344,

"punctuated_word": "to"

},

{

"word": "offer",

"start": 97.985,

"end": 98.225,

"confidence": 1,

"punctuated_word": "offer"

},

{

"word": "you",

"start": 98.225,

"end": 98.465004,

"confidence": 1,

"punctuated_word": "you"

},

{

"word": "a",

"start": 98.465004,

"end": 98.625,

"confidence": 1,

"punctuated_word": "a"

},

{

"word": "40%",

"start": 98.625,

"end": 99.125,

"confidence": 0.9916992,

"punctuated_word": "40%"

},

{

"word": "discount",

"start": 99.265,

"end": 99.765,

"confidence": 1,

"punctuated_word": "discount"

},

{

"word": "on",

"start": 99.825005,

"end": 100.065,

"confidence": 1,

"punctuated_word": "on"

},

{

"word": "your",

"start": 100.065,

"end": 100.225,

"confidence": 1,

"punctuated_word": "your"

},

{

"word": "next",

"start": 100.225,

"end": 100.545,

"confidence": 1,

"punctuated_word": "next"

},

{

"word": "purchase",

"start": 100.545,

"end": 101.01,

"confidence": 1,

"punctuated_word": "purchase"

},

{

"word": "with",

"start": 101.01,

"end": 101.25,

"confidence": 1,

"punctuated_word": "with"

},

{

"word": "us",

"start": 101.25,

"end": 101.75,

"confidence": 0.9995117,

"punctuated_word": "us."

},

{

"word": "yeah",

"start": 103.73,

"end": 104.130005,

"confidence": 0.91259766,

"punctuated_word": "Yeah."

},

{

"word": "i",

"start": 104.130005,

"end": 104.21,

"confidence": 1,

"punctuated_word": "I"

},

{

"word": "mean",

"start": 104.21,

"end": 104.61,

"confidence": 0.9941406,

"punctuated_word": "mean,"

},

{

"word": "that",

"start": 104.61,

"end": 104.85,

"confidence": 0.9980469,

"punctuated_word": "that"

},

{

"word": "sounds",

"start": 104.85,

"end": 105.25,

"confidence": 0.9951172,

"punctuated_word": "sounds"

},

{

"word": "good",

"start": 105.25,

"end": 105.490005,

"confidence": 1,

"punctuated_word": "good"

},

{

"word": "to",

"start": 105.490005,

"end": 105.57,

"confidence": 1,

"punctuated_word": "to"

},

{

"word": "me",

"start": 105.57,

"end": 106.07,

"confidence": 0.99853516,

"punctuated_word": "me."

},

{

"word": "okay",

"start": 106.29,

"end": 106.69,

"confidence": 0.99902344,

"punctuated_word": "Okay."

},

{

"word": "that's",

"start": 106.69,

"end": 107.090004,

"confidence": 0.9995117,

"punctuated_word": "That's"

},

{

"word": "good",

"start": 107.090004,

"end": 107.490005,

"confidence": 0.8005371,

"punctuated_word": "good."

},

{

"word": "okay",

"start": 107.490005,

"end": 107.810005,

"confidence": 0.9970703,

"punctuated_word": "Okay."

},

{

"word": "great",

"start": 107.810005,

"end": 108.265,

"confidence": 0.99853516,

"punctuated_word": "Great."

},

{

"word": "okay",

"start": 110.265,

"end": 110.765,

"confidence": 0.98583984,

"punctuated_word": "Okay."

},

{

"word": "your",

"start": 111.385,

"end": 111.885,

"confidence": 0.9055176,

"punctuated_word": "Your,"

},

{

"word": "yeah",

"start": 112.585,

"end": 112.825,

"confidence": 0.9921875,

"punctuated_word": "yeah,"

},

{

"word": "your",

"start": 112.825,

"end": 113.065,

"confidence": 0.99902344,

"punctuated_word": "your"

},

{

"word": "shoes",

"start": 113.065,

"end": 113.385,

"confidence": 0.5629883,

"punctuated_word": "shoes"

},

{

"word": "should",

"start": 113.385,

"end": 113.465,

"confidence": 1,

"punctuated_word": "should"

},

{

"word": "be",

"start": 113.465,

"end": 113.625,

"confidence": 1,

"punctuated_word": "be"

},

{

"word": "there",

"start": 113.625,

"end": 113.865,

"confidence": 1,

"punctuated_word": "there"

},

{

"word": "on",

"start": 113.865,

"end": 114.365,

"confidence": 1,

"punctuated_word": "on"

},

{

"word": "saturday",

"start": 114.665,

"end": 115.165,

"confidence": 0.88183594,

"punctuated_word": "Saturday,"

},

{

"word": "this",

"start": 115.305,

"end": 115.465,

"confidence": 1,

"punctuated_word": "this"

},

{

"word": "coming",

"start": 115.465,

"end": 115.784996,

"confidence": 1,

"punctuated_word": "coming"

},

{

"word": "saturday",

"start": 115.784996,

"end": 116.284996,

"confidence": 1,

"punctuated_word": "Saturday."

},

{

"word": "is",

"start": 117.14001,

"end": 117.22,

"confidence": 0.7470703,

"punctuated_word": "Is"

},

{

"word": "there",

"start": 117.22,

"end": 117.46001,

"confidence": 1,

"punctuated_word": "there"

},

{

"word": "anything",

"start": 117.46001,

"end": 117.78001,

"confidence": 1,

"punctuated_word": "anything"

},

{

"word": "else",

"start": 117.78001,

"end": 118.26,

"confidence": 1,

"punctuated_word": "else"

},

{

"word": "i",

"start": 118.26,

"end": 118.5,

"confidence": 1,

"punctuated_word": "I"

},

{

"word": "can",

"start": 118.5,

"end": 118.98,

"confidence": 1,

"punctuated_word": "can"

},

{

"word": "help",

"start": 118.98,

"end": 119.3,

"confidence": 1,

"punctuated_word": "help"

},

{

"word": "you",

"start": 119.3,

"end": 119.380005,

"confidence": 1,

"punctuated_word": "you"

},

{

"word": "with",

"start": 119.380005,

"end": 119.880005,

"confidence": 1,

"punctuated_word": "with"

},

{

"word": "at",

"start": 119.94,

"end": 120.100006,

"confidence": 0.99902344,

"punctuated_word": "at"

},

{

"word": "this",

"start": 120.100006,

"end": 120.26,

"confidence": 1,

"punctuated_word": "this"

},

{

"word": "time",

"start": 120.26,

"end": 120.76,

"confidence": 1,

"punctuated_word": "time?"

},

{

"word": "no",

"start": 121.22,

"end": 121.54,

"confidence": 0.9926758,

"punctuated_word": "No."

},

{

"word": "thank",

"start": 121.54,

"end": 121.78001,

"confidence": 1,

"punctuated_word": "Thank"

},

{

"word": "you",

"start": 121.78001,

"end": 122.26,

"confidence": 1,

"punctuated_word": "you."

},

{

"word": "okay",

"start": 122.26,

"end": 122.58,

"confidence": 1,

"punctuated_word": "Okay."

},

{

"word": "great",

"start": 122.58,

"end": 122.9,

"confidence": 0.9995117,

"punctuated_word": "Great."

},

{

"word": "and",

"start": 122.9,

"end": 123.060005,

"confidence": 1,

"punctuated_word": "And"

},

{

"word": "i",

"start": 123.060005,

"end": 123.22,

"confidence": 1,

"punctuated_word": "I"

},

{

"word": "added",

"start": 123.22,

"end": 123.54,

"confidence": 1,

"punctuated_word": "added"

},

{

"word": "the",

"start": 123.54,

"end": 123.700005,

"confidence": 0.9970703,

"punctuated_word": "the"

},

{

"word": "discount",

"start": 123.700005,

"end": 124.18,

"confidence": 1,

"punctuated_word": "discount"

},

{

"word": "to",

"start": 124.18,

"end": 124.420006,

"confidence": 1,

"punctuated_word": "to"

},

{

"word": "your",

"start": 124.420006,

"end": 124.66,

"confidence": 1,

"punctuated_word": "your"

},

{

"word": "account",

"start": 124.66,

"end": 124.98,

"confidence": 0.935791,

"punctuated_word": "account,"

},

{

"word": "so",

"start": 124.98,

"end": 125.3,

"confidence": 1,

"punctuated_word": "so"

},

{

"word": "it",

"start": 125.3,

"end": 125.46001,

"confidence": 1,

"punctuated_word": "it"

},

{

"word": "should",

"start": 125.46001,

"end": 125.700005,

"confidence": 1,

"punctuated_word": "should"

},

{

"word": "populate",

"start": 125.700005,

"end": 126.18,

"confidence": 1,

"punctuated_word": "populate"

},

{

"word": "shortly",

"start": 126.18,

"end": 126.641,

"confidence": 1,

"punctuated_word": "shortly."

},

{

"word": "thanks",

"start": 127.201,

"end": 127.701,

"confidence": 1,

"punctuated_word": "Thanks."

},

{

"word": "thank",

"start": 127.840996,

"end": 128.081,

"confidence": 1,

"punctuated_word": "Thank"

},

{

"word": "you",

"start": 128.081,

"end": 128.561,

"confidence": 1,

"punctuated_word": "you."

},

{

"word": "bye",

"start": 128.561,

"end": 129.061,

"confidence": 0.9975586,

"punctuated_word": "Bye."

}

],

"paragraphs": {

"transcript": "\nHi. Thank you so much for calling Nike. This is Allison. How can I help you today? Hey.\n\nI was supposed to receive a shoe order last Tuesday, and it's now Wednesday, a week later. So, like, what's going on? Oh, okay. I see. Could I have your order number, please?\n\nYeah. It's 905933 679. Okay. Thank you so much for that. Okay.\n\nI apologize. It looks like your order there's been some inclement weather in, like, the Midwest, especially in the north, and your package got stuck at a facility in Wyoming because of that. But it looks like it's cleared since, and the package should be moved to New Jersey, and should be at your home address in 4 days, so it it should be arriving on Saturday. So it'll be arriving almost 2 weeks late instead of a week and a half late. Yeah.\n\nI I'm I'm really sorry about that. I can see here that, you know, you you're a long time customer with us, and, you know, you've placed a lot of orders. So if it's okay with you, on your next order that you placed through our website, because we messed up this time, I'd like to offer you a 40% discount on your next purchase with us. Yeah. I mean, that sounds good to me.\n\nOkay. That's good. Okay. Great. Okay.\n\nYour, yeah, your shoes should be there on Saturday, this coming Saturday. Is there anything else I can help you with at this time? No. Thank you. Okay.\n\nGreat. And I added the discount to your account, so it should populate shortly. Thanks. Thank you. Bye.",

"paragraphs": [

{

"sentences": [

{

"text": "Hi.",

"start": 1.8399999,

"end": 2.24

},

{

"text": "Thank you so much for calling Nike.",

"start": 2.56,

"end": 4.24

},

{

"text": "This is Allison.",

"start": 4.24,

"end": 5.12

},

{

"text": "How can I help you today?",

"start": 5.12,

"end": 6.3399997

},

{

"text": "Hey.",

"start": 7.2549996,

"end": 7.7549996

}

],

"num_words": 18,

"start": 1.8399999,

"end": 7.7549996

},

{

"sentences": [

{

"text": "I was supposed to receive a shoe order last Tuesday, and it's now Wednesday, a week later.",

"start": 8.934999,

"end": 18.74

},

{

"text": "So, like, what's going on?",

"start": 19.36,

"end": 22.16

},

{

"text": "Oh, okay.",

"start": 22.16,

"end": 22.98

},

{

"text": "I see.",

"start": 23.875,

"end": 24.435

},

{

"text": "Could I have your order number, please?",

"start": 24.435,

"end": 26.935

}

],

"num_words": 33,

"start": 8.934999,

"end": 26.935

},

{

"sentences": [

{

"text": "Yeah.",

"start": 27.555,

"end": 27.875

},

{

"text": "It's 905933 679.",

"start": 27.875,

"end": 33.12

},

{

"text": "Okay.",

"start": 34.46,

"end": 34.78

},

{

"text": "Thank you so much for that.",

"start": 34.78,

"end": 36.079998

},

{

"text": "Okay.",

"start": 39.395,

"end": 39.895

}

],

"num_words": 12,

"start": 27.555,

"end": 39.895

},

{

"sentences": [

{

"text": "I apologize.",

"start": 41.155,

"end": 41.895

},

{

"text": "It looks like your order there's been some inclement weather in, like, the Midwest, especially in the north, and your package got stuck at a facility in Wyoming because of that.",

"start": 42.434998,

"end": 56.84

},

{

"text": "But it looks like it's cleared since, and the package should be moved to New Jersey, and should be at your home address in 4 days, so it it should be arriving on Saturday.",

"start": 57.735,

"end": 71.700005

},

{

"text": "So it'll be arriving almost 2 weeks late instead of a week and a half late.",

"start": 72.880005,

"end": 77.935

},

{

"text": "Yeah.",

"start": 77.994995,

"end": 78.395

}

],

"num_words": 84,

"start": 41.155,

"end": 78.395

},

{

"sentences": [

{

"text": "I I'm I'm really sorry about that.",

"start": 78.395,

"end": 80.555

},

{

"text": "I can see here that, you know, you you're a long time customer with us, and, you know, you've placed a lot of orders.",

"start": 81.115,

"end": 87.85

},

{

"text": "So if it's okay with you, on your next order that you placed through our website, because we messed up this time, I'd like to offer you a 40% discount on your next purchase with us.",

"start": 89.43,

"end": 101.75

},

{

"text": "Yeah.",

"start": 103.73,

"end": 104.130005

},

{

"text": "I mean, that sounds good to me.",

"start": 104.130005,

"end": 106.07

}

],

"num_words": 75,

"start": 78.395,

"end": 106.07

},

{

"sentences": [

{

"text": "Okay.",

"start": 106.29,

"end": 106.69

},

{

"text": "That's good.",

"start": 106.69,

"end": 107.490005

},

{

"text": "Okay.",

"start": 107.490005,

"end": 107.810005

},

{

"text": "Great.",

"start": 107.810005,

"end": 108.265

},

{

"text": "Okay.",

"start": 110.265,

"end": 110.765

}

],

"num_words": 6,

"start": 106.29,

"end": 110.765

},

{

"sentences": [

{

"text": "Your, yeah, your shoes should be there on Saturday, this coming Saturday.",

"start": 111.385,

"end": 116.284996

},

{

"text": "Is there anything else I can help you with at this time?",

"start": 117.14001,

"end": 120.76

},

{

"text": "No.",

"start": 121.22,

"end": 121.54

},

{

"text": "Thank you.",

"start": 121.54,

"end": 122.26

},

{

"text": "Okay.",

"start": 122.26,

"end": 122.58

}

],

"num_words": 28,

"start": 111.385,

"end": 122.58

},

{

"sentences": [

{

"text": "Great.",

"start": 122.58,

"end": 122.9

},

{

"text": "And I added the discount to your account, so it should populate shortly.",

"start": 122.9,

"end": 126.641

},

{

"text": "Thanks.",

"start": 127.201,

"end": 127.701

},

{

"text": "Thank you.",

"start": 127.840996,

"end": 128.561

},

{

"text": "Bye.",

"start": 128.561,

"end": 129.061

}

],

"num_words": 18,

"start": 122.58,

"end": 129.061

}

]

}

}

]

}

]

}

}

[Speaker 0]: Hi. Thank you so much for calling Nike. This is Allison. How can I help you today? timestamp: 1.83-6.33

[Speaker 1]: Hey. I was supposed to receive a shoe order last Tuesday, and it's now Wednesday, a week later. So, like, what's going on? timestamp: 7.25-22.16

[Speaker 0]: Oh, okay. I see. Could I have your order number, please? timestamp: 22.16-26.93

[Speaker 1]: Yeah. It's 905933 679. timestamp: 27.55-28.77

[Speaker 0]: Okay. Thank you so much for that. Okay. I apologize. timestamp: 32.46-41.89

[Speaker 0]: It looks like your order there's been some inclement weather in, like, the Midwest especially in the north. And your package got stuck at a facility in Wyoming because of that. But it looks like it's cleared since, and the package should be moved to New Jersey. It should be at your home address in 4 days. So it it should be arriving on Saturday. timestamp: 42.35-71.70

[Speaker 1]: So it'll be arriving almost 2 weeks late instead of a week and a half late. timestamp: 72.80-77.85

[Speaker 0]: Yeah. I I'm I'm really sorry about that. I can see here that you know, you you're a long time customer with us, and, you know, you've placed a lot of orders. So if it's okay with you, on your next order that you placed through our website because we messed up this time, I'd like to offer you a 40% discount on your next purchase with us. timestamp: 77.99-101.67

[Speaker 1]: Yeah. I mean, that sounds good to me. timestamp: 103.65-106.07

[Speaker 0]: Okay. That's good. Okay. Great. Okay. timestamp: 106.21-110.84

[Speaker 0]: Your yeah. Your shoe should be there on Saturday, this coming Saturday. Is there anything else I can help you with at this time? timestamp: 111.38-120.76

[Speaker 1]: No. Thank you. timestamp: 121.22-122.26

[Speaker 0]: Okay. Great. And I added the discount to your account, so it should populate shortly. timestamp: 122.26-126.64

[Speaker 1]: Thanks. timestamp: 127.20-127.70

[Speaker 0]: Thank you. Bye. timestamp: 127.84-129.06
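The JSON response above nests its sentence timings under `results.channels[0].alternatives[0].paragraphs.paragraphs[].sentences[]`, and each word object carries a `confidence` score. The following is a minimal Python sketch of how a client might turn that structure into timestamped lines like the ones shown here and flag uncertain words. The function names, the `0.95` threshold, and the trimmed `sample_response` are illustrative assumptions, not part of any Deepgram SDK; the sketch also assumes the word array sits under a `words` key, which falls outside this excerpt.

```python
from typing import Any


def sentence_lines(response: dict[str, Any]) -> list[str]:
    """Format every sentence as 'text timestamp: start-end' (two decimals)."""
    alternative = response["results"]["channels"][0]["alternatives"][0]
    lines = []
    for paragraph in alternative["paragraphs"]["paragraphs"]:
        for sentence in paragraph["sentences"]:
            lines.append(
                f'{sentence["text"]} '
                f'timestamp: {sentence["start"]:.2f}-{sentence["end"]:.2f}'
            )
    return lines


def low_confidence_words(response: dict[str, Any], threshold: float = 0.95) -> list[str]:
    """Return punctuated words whose confidence is below the threshold.

    Assumes the word list is stored under the key "words" (hypothetical here,
    since that key is not visible in the excerpt).
    """
    alternative = response["results"]["channels"][0]["alternatives"][0]
    return [
        word["punctuated_word"]
        for word in alternative["words"]
        if word["confidence"] < threshold
    ]


# Trimmed sample built from values that appear in the response above.
sample_response = {
    "results": {
        "channels": [
            {
                "alternatives": [
                    {
                        "words": [
                            {"word": "discount", "start": 123.700005, "end": 124.18,
                             "confidence": 1, "punctuated_word": "discount"},
                            {"word": "account", "start": 124.66, "end": 124.98,
                             "confidence": 0.935791, "punctuated_word": "account,"},
                        ],
                        "paragraphs": {
                            "paragraphs": [
                                {
                                    "sentences": [
                                        {"text": "Thanks.", "start": 127.201, "end": 127.701},
                                        {"text": "Thank you.", "start": 127.840996, "end": 128.561},
                                    ]
                                }
                            ]
                        },
                    }
                ]
            }
        ]
    }
}

for line in sentence_lines(sample_response):
    print(line)
print(low_confidence_words(sample_response))
```

Reading from the `paragraphs` structure rather than the flat word list keeps sentence boundaries intact. Adding speaker labels would need per-word or per-paragraph speaker information, which this excerpt of the response does not include.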

Summary: The customer calls Wham to change their payment and asks if they can do it on the phone since their sub is about to renew. The agent takes down the customer's information and updates their card information, including billing address and expiration date. The call ends with the agent thanking the customer for calling.

Transcript: Hi. Thank you so much for calling Wham. My name is Ali. How can I help you today? Hey. Just trying to change the payment info on the website. since my sub is about to renew. I was wondering if you could do it on the phone. Yeah. That shouldn't be a problem. Could I have your first and last name, please? Erin Shurtz, s c h e r t z. Alright. Thank you so much, Erin. Could I also have your phone number, please? 713-899 0745. Excellent. And I'm just going to verify with your security question really quick, if you don't mind. What street did you grow up on? Cypress Avenue. Okay. Excellent. Thank you so much. And I can just go ahead and update that card info for you. So first, what is the card number? 4708 Okay. 1209. Okay. 8732. Uh-huh. 7655. Great. And could I also have your expiration date as well? February 2028. Great. And could I also have your CVC on the back? 482. Okay. Thank you. And the the billing address is still the same? Yep. Okay. Great. then you're all set. Thank you so much for calling in.

Unbeatable value, unmatched performance

Extract the most value with speech-to-text and Language AI.