Flux is the first conversational speech model built for voice agents in English, unifying turn detection, interruption handling, and transcription in a single real-time architecture.

Today, it goes global.

Introducing Flux Multilingual: one conversational STT model for real-time voice agents, now in 10 languages — English, Spanish, French, German, Hindi, Russian, Portuguese, Japanese, Italian, and Dutch. Language detection, code-switching, turn detection, and interruption handling all run natively through a single streaming connection.

Extending voice agents across languages no longer means stitching together monolingual models, routing layers, and detection logic. Developers build against one conversational model and a single API, with monolingual-grade accuracy across languages.

If you’re already on Flux, it’s a one-line change: swap flux-general-en for flux-general-multi.

Same API, same streaming semantics, same integration.
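As a minimal sketch of that swap, assume the model is selected through a `model` query parameter on the streaming URL. The base URL below is a placeholder rather than a confirmed endpoint, and `streaming_url` is a hypothetical helper; reuse whatever endpoint your existing Flux integration already connects to.

```python
from urllib.parse import urlencode

# Placeholder base URL (assumption): substitute the streaming endpoint
# your current Flux integration already uses.
BASE_URL = "wss://example.invalid/listen"


def streaming_url(model: str, base_url: str = BASE_URL) -> str:
    """Build a streaming URL that selects the model via a query parameter."""
    return f"{base_url}?{urlencode({'model': model})}"


before = streaming_url("flux-general-en")
after = streaming_url("flux-general-multi")  # the one-line change

print(before)
print(after)
```

Everything else in the connection code stays as it was; only the model name differs between the two URLs.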

Flux Multilingual is supported through partner integrations with Twilio, Vapi, LiveKit, Pipecat, and Jambonz.

“Customers told us that Flux transformed what's possible for real-time voice AI agents in English. It stood to reason that Deepgram would solve this globally too. Our customers' teams no longer need to sacrifice accuracy with legacy multilingual systems, nor stitch multiple models with complex routing themselves. With Flux Multilingual, teams take the exact conversational experience they built for English and extend it across languages with a single system.”

Omar Paul, VP at Twilio

Build Once, Deploy Globally

Traditional approaches to multilingual voice systems often rely on multiple components, such as separate models, language handling, and system logic, to approximate a single conversational experience. Each new language adds infrastructure and system overhead, driving up latency and making systems harder to maintain.

Teams are forced into a tradeoff: systems that prioritize accuracy often introduce latency, while systems optimized for speed struggle to maintain conversational performance in real time.

Neither approach delivers the responsiveness and conversational performance required for production voice agents.

Flux Multilingual collapses detection, routing, and per-language models into a single conversational model and a single streaming connection. Instead of managing a system of components, developers build against one model that handles speech recognition, turn detection, language detection, and code-switching in real time.

There’s no routing logic to maintain, no orchestration layer to debug, and no tradeoff between conversational performance and global coverage.

In the Voice Agent API, Flux Multilingual pairs with the new auto_language_detection setting: configure your TTS voices by language, and the agent routes to the matching voice based on what Flux detects. That's STT and TTS coordinating through a single API configuration, without a custom language-ID service or routing layer.
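Conceptually, the routing that this setting takes off your hands looks something like the sketch below. It is an illustration only and not the Voice Agent API schema: the voice names, the two-letter language codes, and the `pick_voice` helper are all hypothetical.

```python
# Hypothetical voice names and language codes, for illustration only.
VOICES_BY_LANGUAGE = {
    "en": "voice-en-1",
    "es": "voice-es-1",
    "fr": "voice-fr-1",
}


def pick_voice(detected, voices=VOICES_BY_LANGUAGE, default_language="en"):
    """Return the voice for the first detected language that has one configured.

    Falls back to the default language's voice when nothing matches.
    """
    for lang in detected:
        if lang in voices:
            return voices[lang]
    return voices[default_language]


print(pick_voice(["es"]))        # Spanish turn
print(pick_voice(["ja"]))        # no Japanese voice configured, so the default is used
print(pick_voice(["nl", "fr"]))  # first language with a configured voice wins
```

With auto_language_detection, this mapping lives in the agent configuration instead of in code you maintain.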

“Flux Multilingual is a big step toward making global voice agents truly accessible to developers. Instead of managing multiple models and infrastructure, teams can extend the same real-time conversational experience across languages within a single system. With voice capabilities powered by Deepgram, that’s exactly the kind of simplicity developers need to scale.”

Vijay Raghunathan, VP of Engineering at Vapi

“Scaling customer operations across languages in financial services often requires additional systems, routing logic, and operational oversight. In regulated environments, that quickly becomes difficult to manage. Flux Multilingual makes it possible to extend those workflows across markets without re-architecting the stack.”

Danai Antoniou, Chief Scientist and Co-Founder at Gradient Labs

“For teams building and deploying voice applications, maintaining control without introducing additional complexity has always been a challenge. Multilingual systems often force tradeoffs between latency, accuracy, and operational overhead. Flux Multilingual changes that by enabling conversational speech recognition that works across languages in real time.”

Dave Horton, Founder at Jambonz

Monolingual-Grade Accuracy with Real-Time Language Control

Flux Multilingual delivers accuracy that was previously only achievable with dedicated monolingual models.

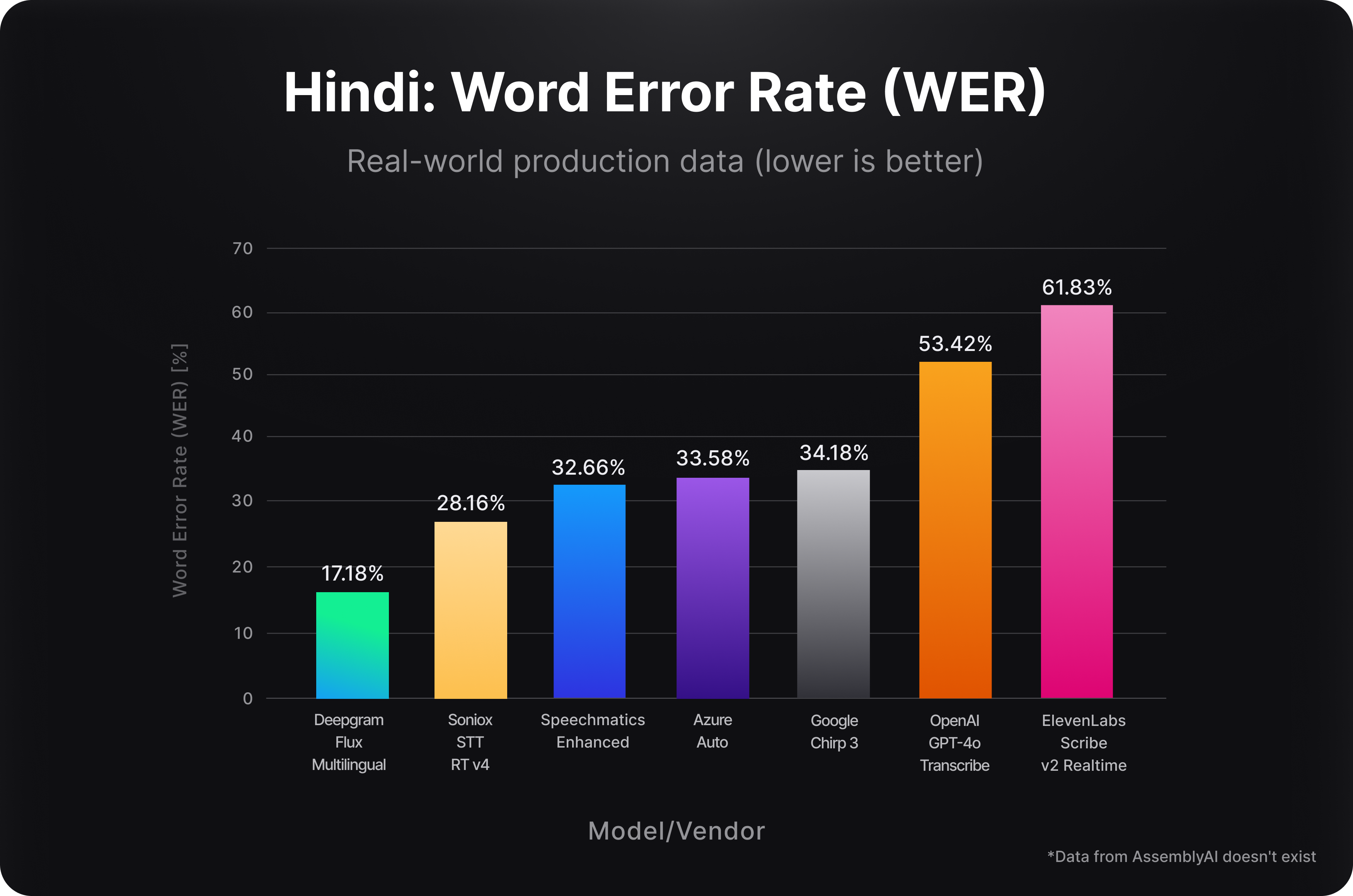

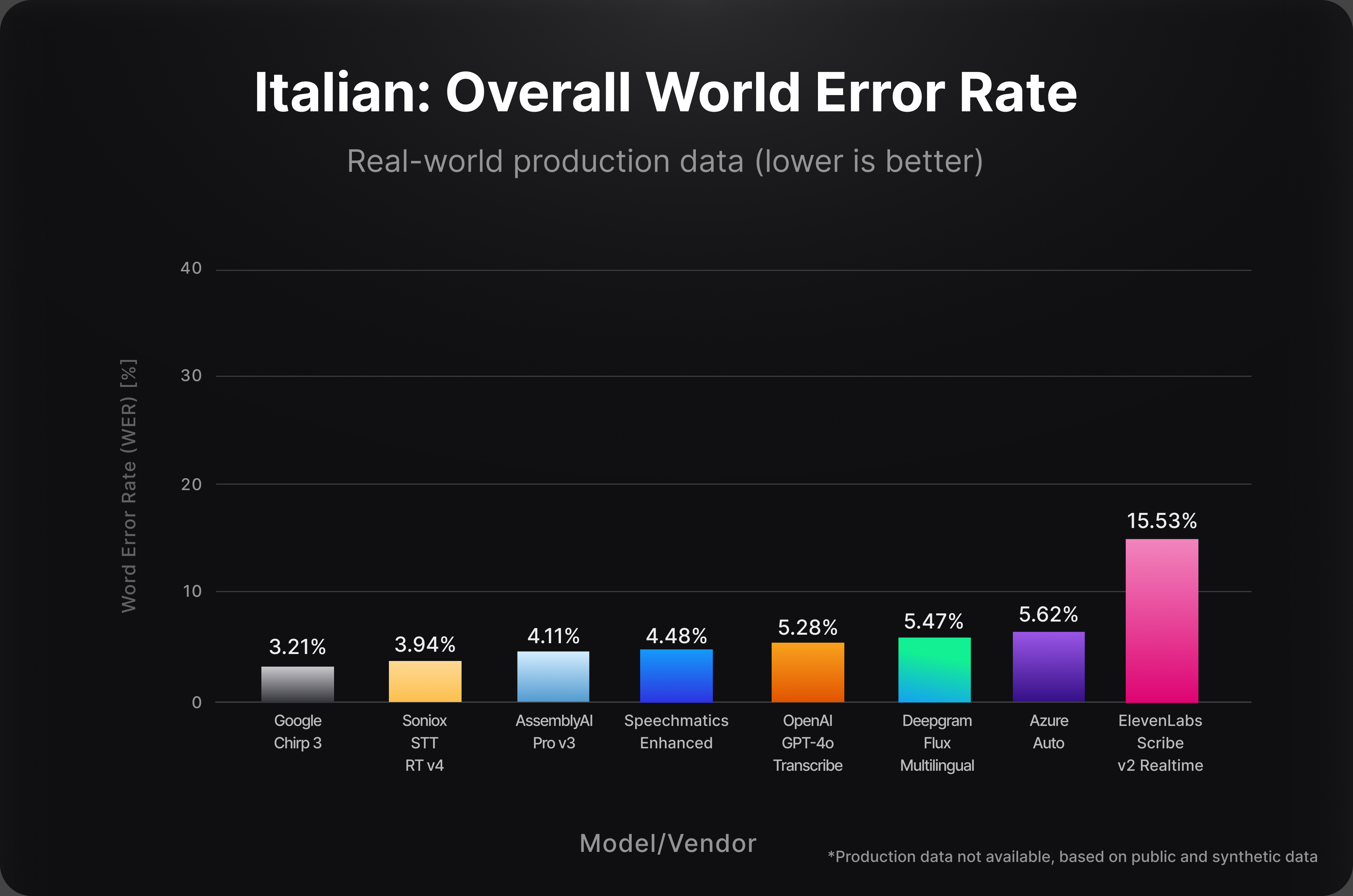

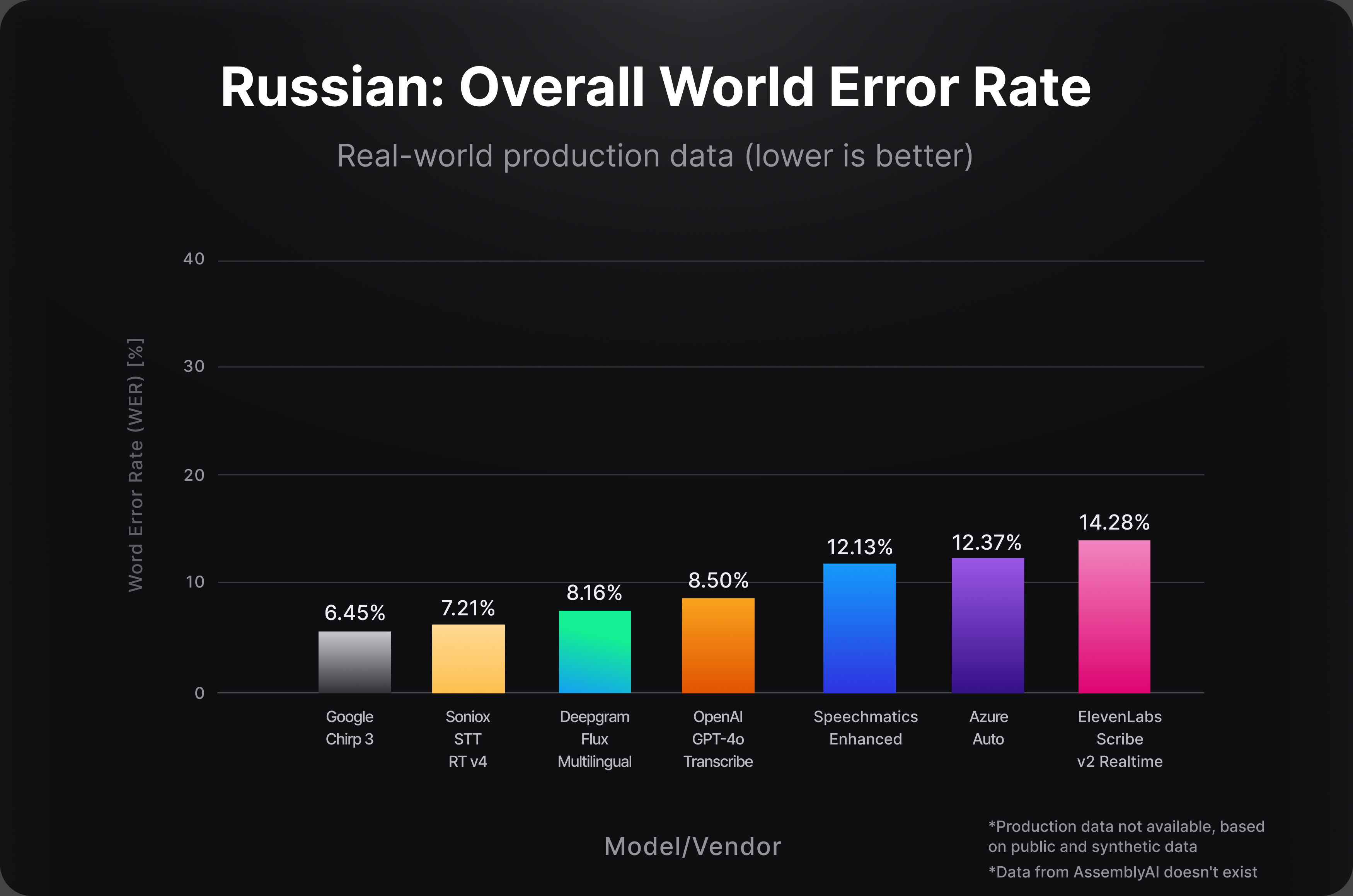

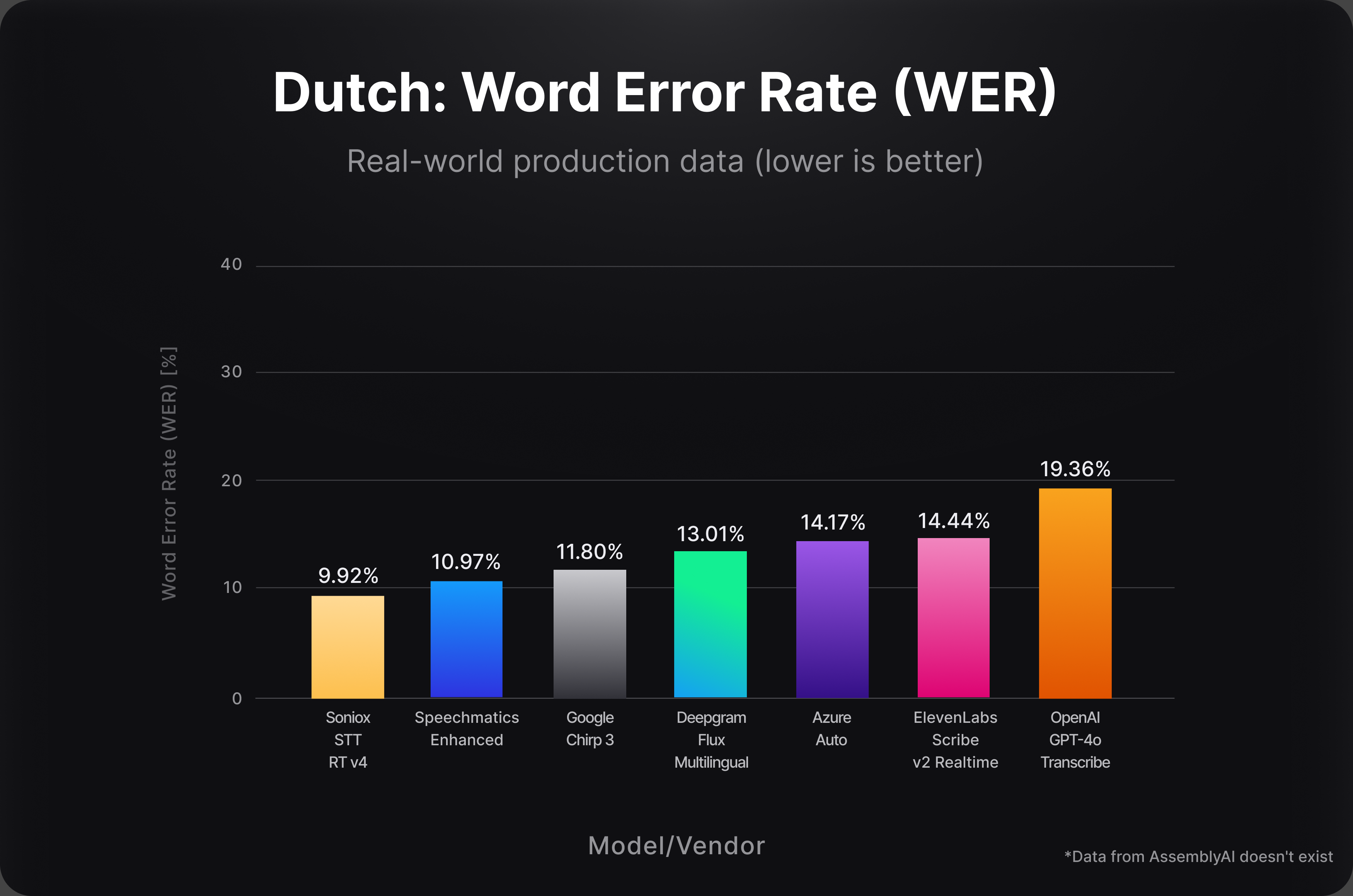

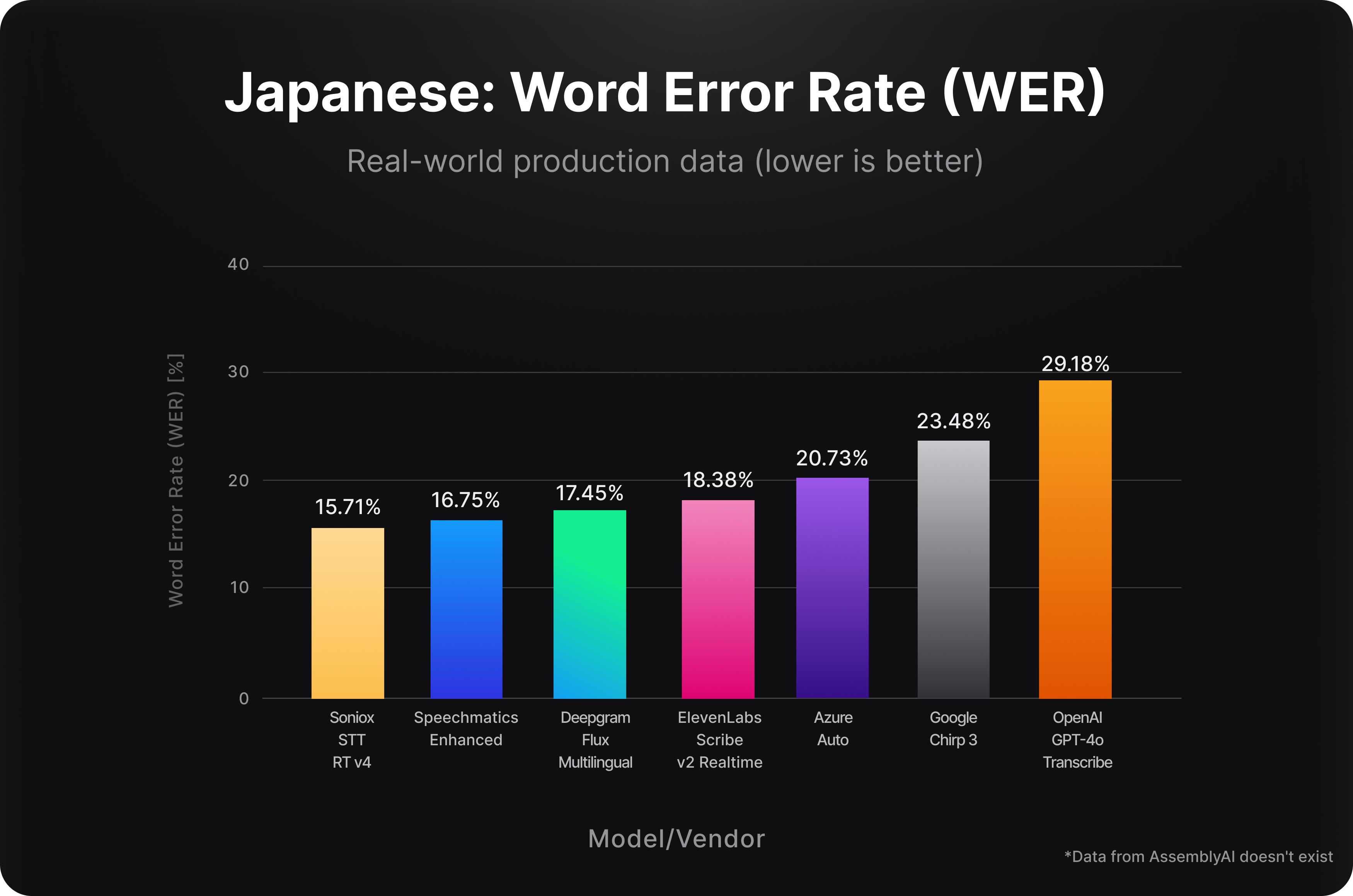

To evaluate performance, we benchmarked Flux Multilingual on real-world production audio across all supported languages, measuring word error rate (WER) using each vendor’s default streaming configuration. Each system processed complete, unmodified audio to reflect how these models perform in production environments.
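For readers less familiar with the metric, WER is the word-level edit distance between a reference transcript and the model's output, divided by the number of reference words. The sketch below is a generic implementation, not the benchmark harness used here; it applies no text normalization, and the `word_error_rate` name is ours.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution or exact match
            ))
        prev = curr
    return prev[-1] / len(ref)


# One substituted word out of six reference words.
print(word_error_rate("i need help with my account",
                      "i need help with my cuenta"))
```

Lower is better: a WER of 0 means the output matched the reference word for word.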

Across these benchmarks, Flux Multilingual delivers best-in-class WER across the majority of supported languages on real-world production audio, including English, Spanish, German, French, Portuguese, and Hindi. Per-language results are shown in the accompanying charts.

Flux Multilingual gives developers precise control over how language is handled.

At the center is a single parameter: language_hint

A language hint tells the model which language to expect, delivering accuracy that was previously only achievable with monolingual models. Providing multiple language hints narrows the search space for multilingual environments, like global contact centers, while still allowing the model to switch between languages in real time.

This enables four core patterns:

- Known language → maximize accuracy by hinting a single language

- Known language set → constrain detection without routing logic

- Unknown language(s) → let Flux automatically detect

- Mixed-language conversations → native code-switching
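The first three patterns differ only in how many hints you send. The sketch below assumes hints are passed as repeated `language_hint` query parameters and that languages are identified by two-letter codes; both are assumptions for illustration, and `hint_query` is a hypothetical helper, so check the API reference for the exact format.

```python
from urllib.parse import urlencode

# Two-letter codes for the ten supported languages (the codes are an assumption).
SUPPORTED = {"en", "es", "fr", "de", "hi", "ru", "pt", "ja", "it", "nl"}


def hint_query(hints=()):
    """Build a query string with zero, one, or several language hints."""
    unknown = [h for h in hints if h not in SUPPORTED]
    if unknown:
        raise ValueError(f"unsupported language hint(s): {unknown}")
    params = [("model", "flux-general-multi")]
    params += [("language_hint", h) for h in hints]  # one parameter per hint
    return urlencode(params)


print(hint_query(["es"]))        # known language
print(hint_query(["en", "es"]))  # known language set
print(hint_query())              # unknown language: no hints, automatic detection
```

The fourth pattern needs no extra setting in this sketch, since code-switching is described as native to the model.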

A caller might say:

“I need help with my cuenta.” — switching from English to Spanish within the same sentence (“cuenta” meaning “account”)

With traditional systems, that interaction often leads to errors or degraded performance. Language detection must resolve to a dominant language, and systems may misclassify mixed-language input or fall back, introducing errors, latency, or both.

With Flux Multilingual, this is handled natively. There’s no model switching, no detection errors, and no need for additional system logic.

Each turn in the response includes a languages array identifying the languages detected in that turn, giving per-turn granularity where competitors report language only at the utterance level.
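Reading that array might look like the sketch below. Only the `languages` field comes from the description above; the rest of the message shape, including the `transcript` field, the two-letter codes, and both helper functions, is hypothetical.

```python
import json


def turn_languages(raw_message: str) -> list:
    """Return the languages reported for one turn (empty list if absent)."""
    message = json.loads(raw_message)
    return list(message.get("languages", []))


def is_code_switched(raw_message: str) -> bool:
    """True when a single turn contains more than one distinct language."""
    return len(set(turn_languages(raw_message))) > 1


# Hypothetical turn message for the mixed English/Spanish example.
example = json.dumps({
    "transcript": "I need help with my cuenta.",
    "languages": ["en", "es"],
})

print(turn_languages(example))
print(is_code_switched(example))
```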

When the language is unknown, Flux Multilingual automatically detects it from audio and continues to adapt in real time, even mid-sentence.

Language behavior isn’t fixed at connection time.

Language settings are reconfigurable mid-conversation without reconnecting. This allows systems to detect a caller’s language on the first turn, then optimize for it across the rest of the interaction.
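A detect-then-optimize flow could be sketched as follows. The control message here is hypothetical: the `type` and `language_hints` field names are placeholders rather than the documented message format, and `LanguagePinner` is our own illustrative class.

```python
import json
from typing import List, Optional


class LanguagePinner:
    """Emit one reconfiguration message after the first turn with a detected language."""

    def __init__(self) -> None:
        self.pinned: Optional[List[str]] = None

    def on_turn(self, languages: List[str]) -> Optional[str]:
        """Return a message to send on the open connection, or None if nothing to do."""
        if self.pinned is not None or not languages:
            return None  # already pinned, or nothing detected yet
        self.pinned = list(languages)
        # Hypothetical message shape; consult the API reference for the real one.
        return json.dumps({"type": "Configure", "language_hints": self.pinned})


pinner = LanguagePinner()
print(pinner.on_turn([]))      # no detection yet, so nothing is sent
print(pinner.on_turn(["es"]))  # first detected language, so hints are sent once
print(pinner.on_turn(["en"]))  # later turns leave the pinned hints alone
```

Pinning every language detected on the first turn, rather than only one, keeps code-switching callers covered while still narrowing the search space.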

“Evaluating multilingual voice agents consistently, especially in code-switching scenarios, has been one of the hardest problems. Flux Multilingual gives us one conversational model, making it easier to test, validate, and trust performance at scale.”

Brooke Hopkins, Founder at Coval

“The difference between a good assistant and a great one is how well it keeps up with real conversations (not robotic ones). In multilingual settings that usually breaks down, which means missed utterances, language switching, and the natural flow of the conversation dies. Flux Multilingual keeps it going, just like talking to a friend or colleague.”

Lindy Drope, Head of Sales at Lindy AI

Ultra-Low Latency Conversational Speech Recognition

Multilingual systems have historically forced tradeoffs between latency, accuracy, and conversational flow, where improving one dimension comes at the expense of another. Faster systems sacrifice accuracy, while more accurate systems introduce delay.

Flux Multilingual eliminates those tradeoffs.

- Interruption handling is native, preserving natural conversational flow across languages

- Streaming latency stays consistently low for real-time interaction in multilingual scenarios

- Accuracy remains on par with dedicated single-language models, across every supported language

This allows developers to extend conversational systems globally without degrading responsiveness, accuracy, or user experience.

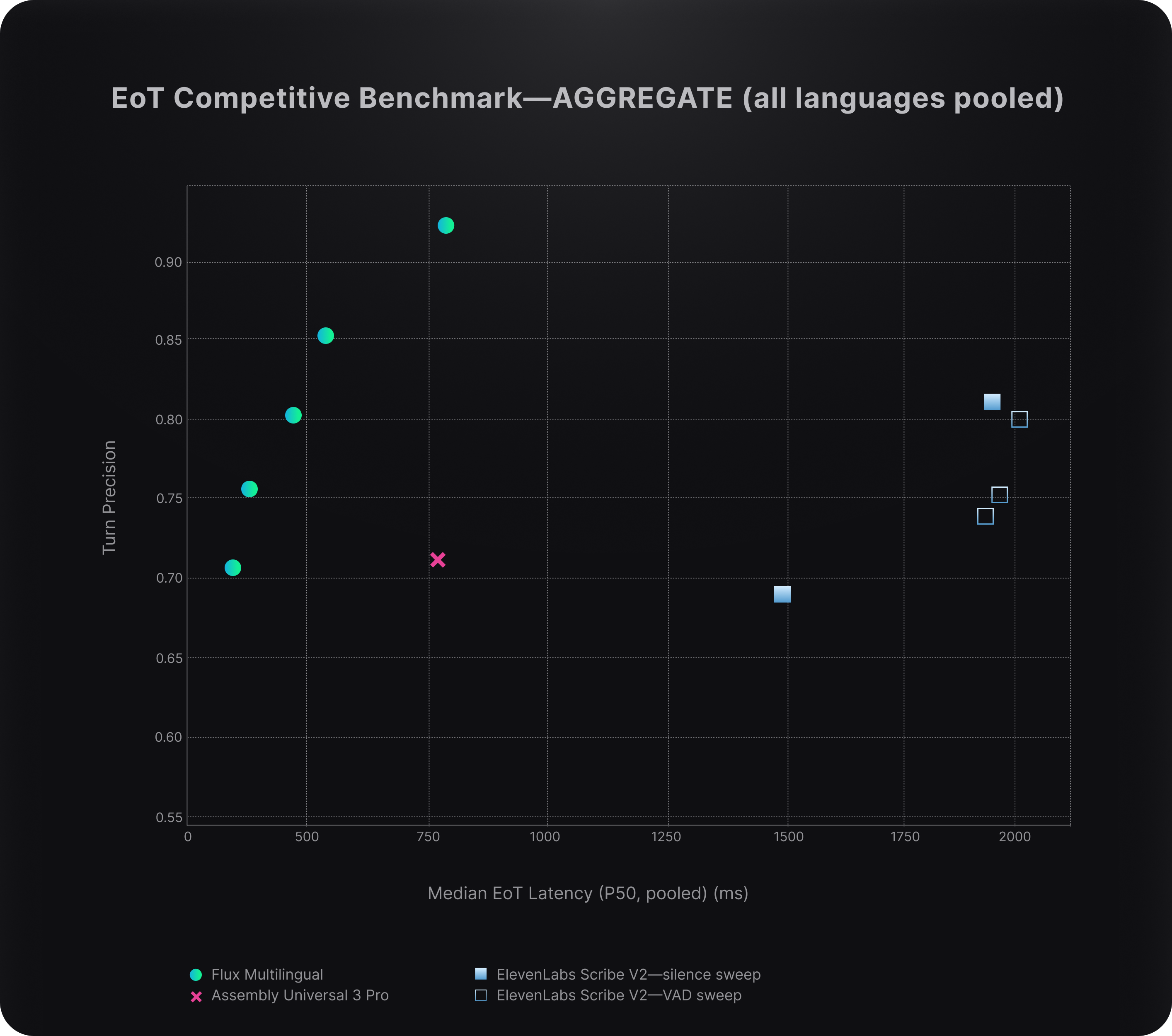

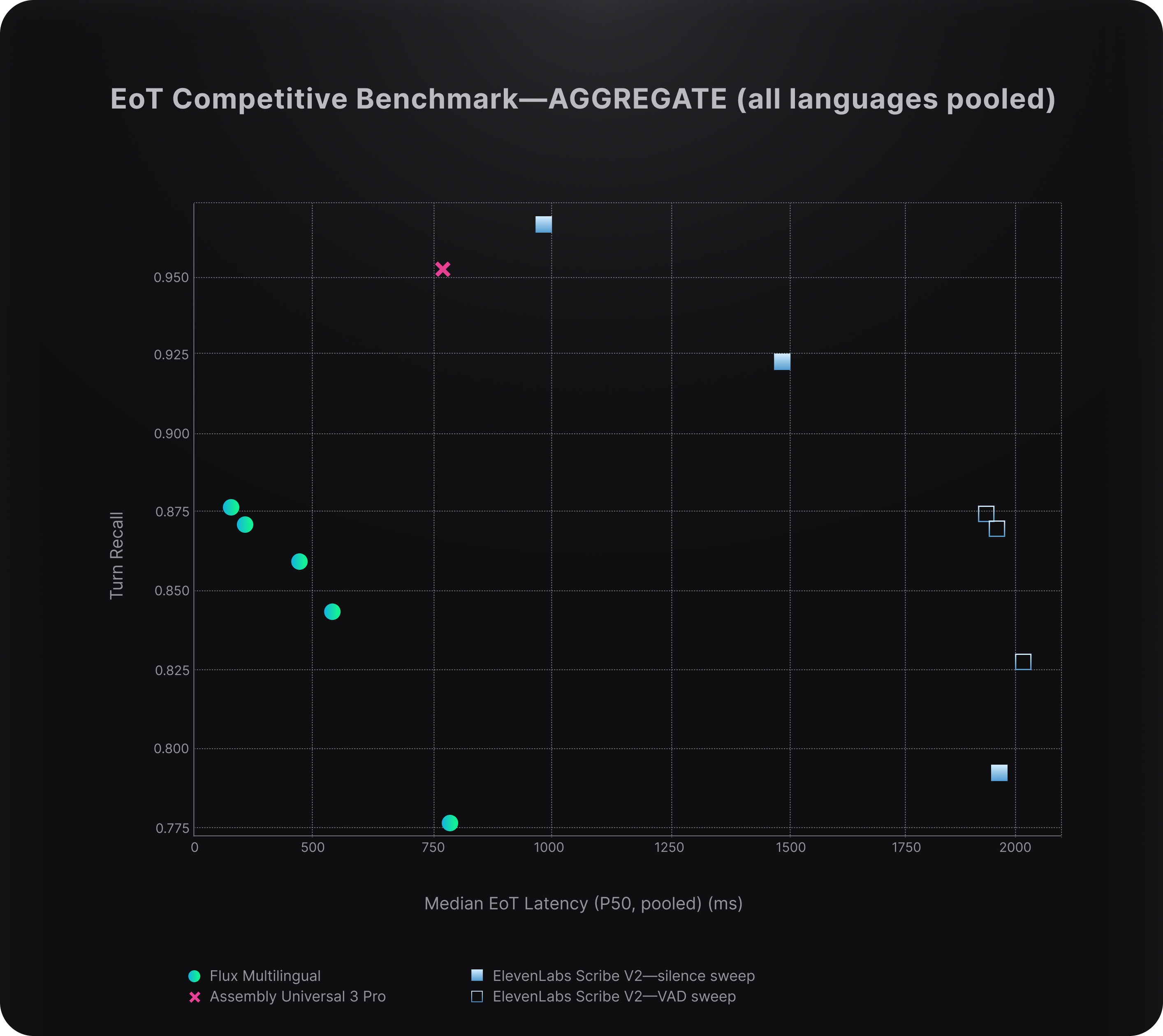

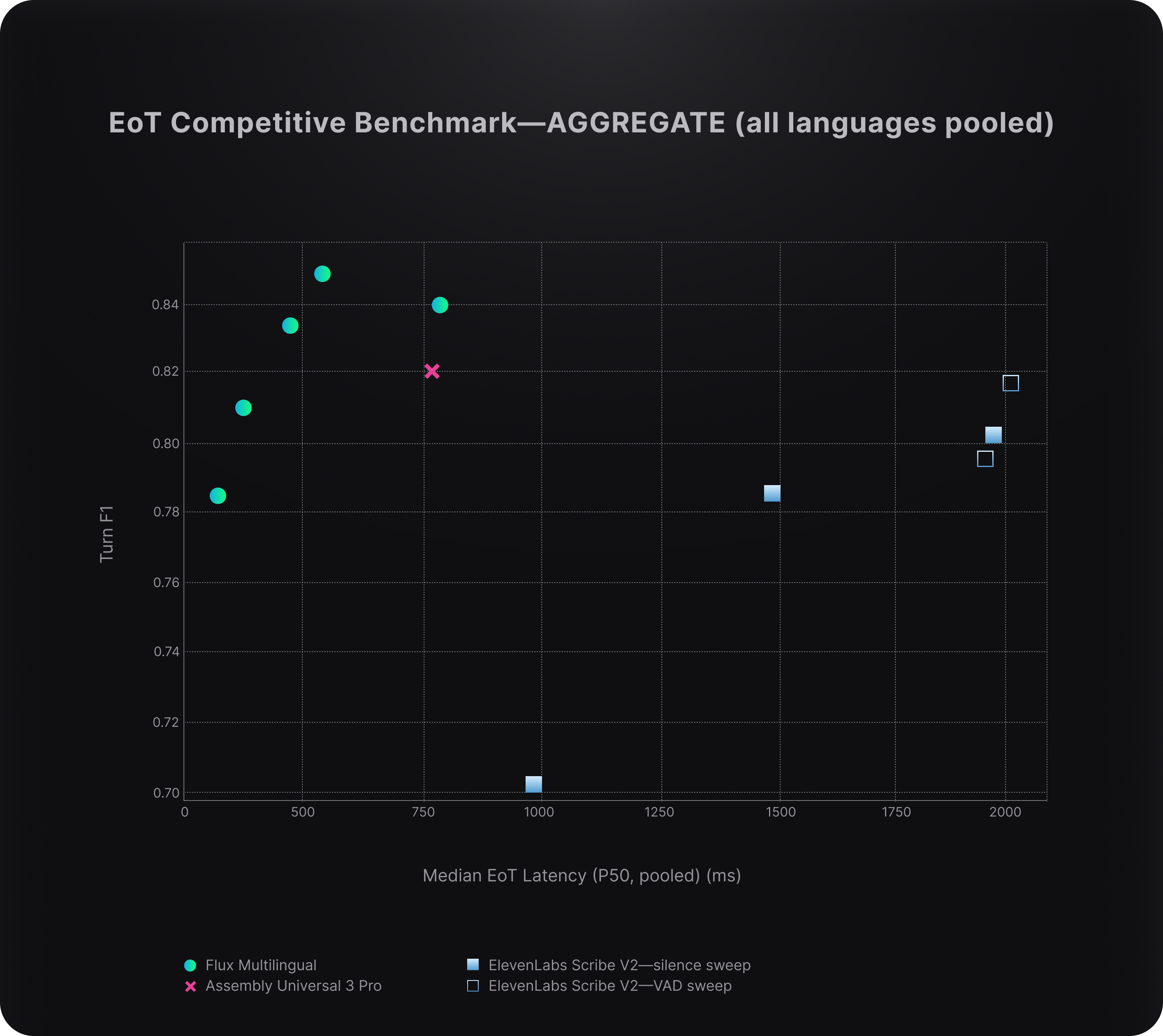

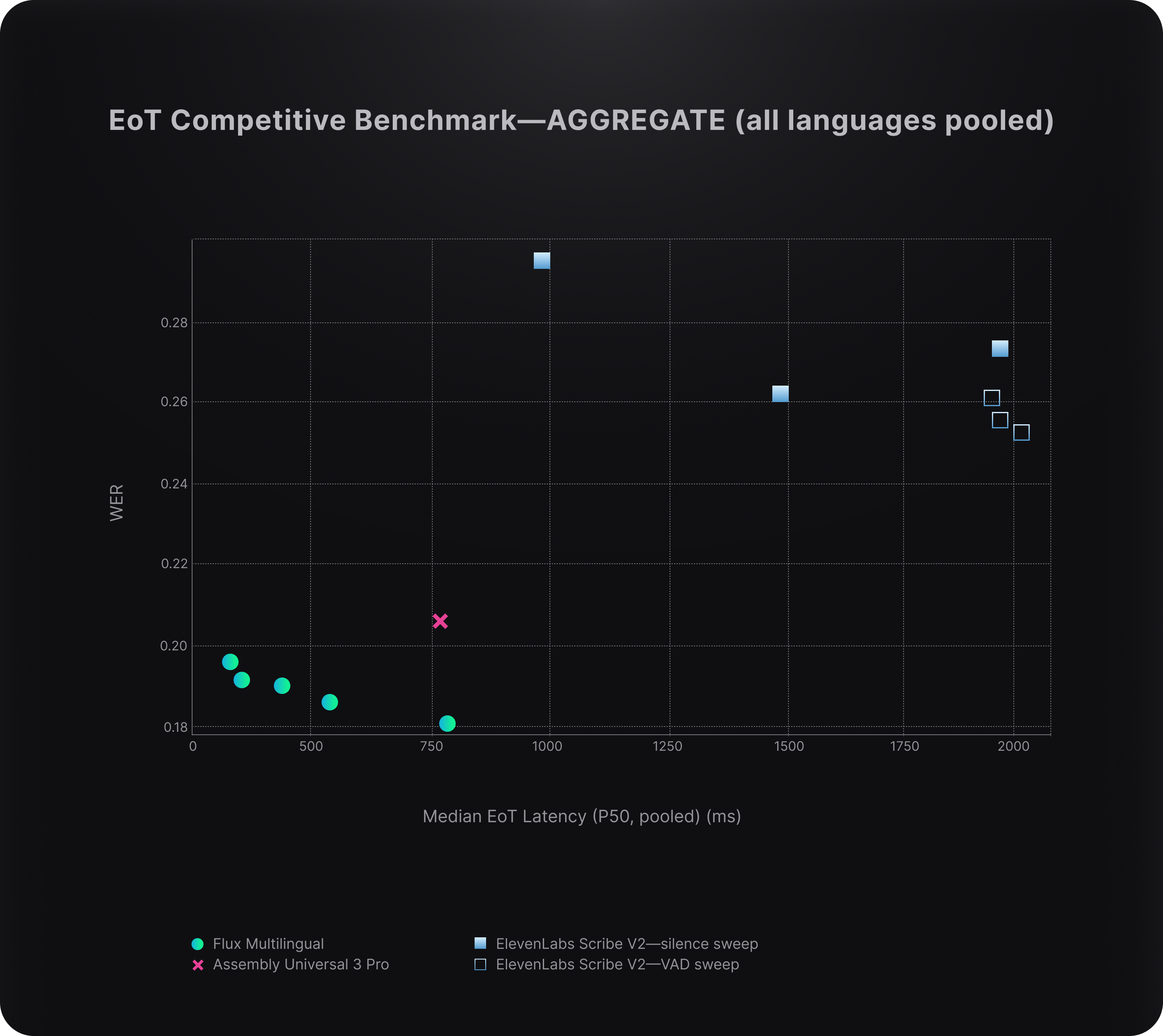

To evaluate conversational performance, we benchmarked end-of-turn (EoT) accuracy and latency on real-world production audio across all supported languages, measuring F1 score and median latency under each vendor’s default or recommended configurations. This captures how well each system determines when a speaker has finished speaking, a critical requirement for real-time voice agents.
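For reference, the two metrics can be computed as in the sketch below. The counts and latencies are made-up illustrative values, not benchmark results, and how a predicted end of turn is matched to a reference one is left out because the post does not specify it.

```python
from statistics import median


def f1_score(true_pos: int, false_pos: int, false_neg: int) -> float:
    """Harmonic mean of precision and recall for end-of-turn decisions."""
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Illustrative values only (not measured data).
correct, false_triggers, missed = 90, 10, 10
latencies_ms = [180, 220, 260, 300, 900]

print(f1_score(correct, false_triggers, missed))
print(median(latencies_ms))
```

F1 penalizes both false triggers (cutting the speaker off) and missed turn ends (leaving the caller waiting), while the median keeps one slow outlier from dominating the latency figure.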

Across these benchmarks, Flux Multilingual delivers:

- Highest aggregate EoT F1 across all supported languages

- Up to 3x lower latency than competing real-time EoT systems

This advantage comes from how turn detection is handled. Instead of relying on silence thresholds, Flux uses a learned confidence signal that understands conversational context. Because it's a confidence signal, Flux exposes it as a tunable threshold, letting developers dial toward faster responses or more conservative end-of-turn decisions per use case.
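The effect of moving that threshold can be illustrated with a toy example. The confidence trace below is invented, and `first_end_of_turn` is a hypothetical helper that mimics the decision rule rather than anything the API exposes.

```python
def first_end_of_turn(confidences, threshold):
    """Return the index of the first frame whose confidence meets the threshold."""
    for index, confidence in enumerate(confidences):
        if confidence >= threshold:
            return index
    return None  # the threshold was never reached


# Invented end-of-turn confidence values, rising as the speaker finishes.
trace = [0.10, 0.35, 0.62, 0.81, 0.93]

print(first_end_of_turn(trace, 0.6))   # lower threshold: fires earlier
print(first_end_of_turn(trace, 0.9))   # higher threshold: waits for more certainty
print(first_end_of_turn(trace, 0.99))  # never confident enough on this trace
```

A lower threshold suits fast back-and-forth exchanges, while a higher one suits cases where cutting a caller off is costly.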

Competitors relying on silence-based approaches face a tradeoff between speed and precision. Reducing latency increases false triggers, while improving precision introduces delay. Flux avoids this tradeoff, delivering consistently strong performance across all supported languages.

For voice agents, this is the difference between reacting to silence and understanding conversation.

The full results are shown in the accompanying charts.

“Handling conversation flow correctly is one of the hardest parts of building voice agents, and it only gets more complex across languages. With real-time voice capabilities powered by Deepgram, extending turn-aware conversational behavior into multilingual interactions gives developers a much stronger foundation to build on.”

Kwindla Hultman Kramer, CEO at Daily

“In financial services, every conversation carries real consequences, which makes accuracy and consistency non-negotiable. Flux Multilingual gives us more control over language behavior while adapting in real time, which is critical for delivering reliable multilingual experiences at scale.”

German Attanasio, CTO at Moveo.AI

Deployment: Cloud API or Self-Hosted

Flux Multilingual is available in two deployment modes, using the same API and integration model:

Cloud API (Deepgram-hosted)

- Fastest way to get started

- Fully managed infrastructure with global and regional endpoints

- Ideal for most production voice applications

Self-Hosted (customer-operated)

- Run Flux Multilingual in your own environment

- Audio never leaves your infrastructure

- Designed for strict data residency, privacy, security, or latency requirements

Both deployment options use the same API, streaming semantics, and SDKs.

Try Flux Multilingual Today

Flux Multilingual is now generally available. The model supports English, Spanish, French, German, Hindi, Russian, Portuguese, Japanese, Italian, and Dutch. Additional languages will continue to be added in future releases.

As part of the launch, we’re offering a limited-time promotional rate on streaming speech-to-text, including Flux Multilingual and Nova-3 models.

Get started with Flux Multilingual via the Deepgram API for streaming speech-to-text, or through the Voice Agent API for end-to-end voice agents.

Already using Flux EN? Change your model to flux-general-multi. Same API, same integration.

Start building today:

- See the live demo →

- Try it in the Playground →

- Get started with the API →

- Build end-to-end with the Voice Agent API →

- Sign up for an API key →

- Explore the documentation →

- Join our Discord community →

Conversational STT for voice agents, now in every language your customers speak. Build globally with Flux Multilingual, without rebuilding your stack.