Introducing New Audio Intelligence Models for Sentiment, Intent, and Topic Detection

TL;DR:

We’ve enhanced our Voice AI platform with new audio intelligence models that provide spoken language understanding and speech analytics for conversational interactions.

Our models are faster and more efficient than LLM-based alternatives and are fine-tuned on more than 60K domain-specific conversations, so you can accurately score sentiment, recognize caller intent, and detect topics at scale.

Learn more about our new sentiment analysis, intent recognition, and topic detection models on our API Documentation, product page, and upcoming product showcase webinar.

Deepgram Audio Intelligence: Extracting insights and understanding from speech

We’re excited to announce a major expansion of our Audio Intelligence lineup since the launch of our first-ever task-specific language model (TSLM) for speech summarization last summer. This release adds models for three high-level natural language understanding tasks: sentiment analysis, intent recognition, and topic detection.

In contrast to the hundred-billion-parameter, general-purpose large language models (LLMs) from the likes of OpenAI and Google, our models are lightweight, purpose-driven, and fine-tuned on domain- and task-specific conversational data sets. The result? Superior accuracy on specialized topics, lightning-fast speed, and low inference costs that make high-throughput, low-latency use cases, such as contact center and sales enablement applications, viable.

The Deepgram Voice AI Platform

Despite continued investment in digital transformation and the adoption of omnichannel customer service strategies, voice remains the most preferred and most heavily used customer communication channel. As a result, leading service organizations are increasingly turning to AI technologies like next-gen speech-to-text, advanced speech analytics, and conversational AI agents (i.e., voicebots) to maximize the efficiency and effectiveness of this important channel.

But transcribing voice interactions with fast, efficient, accurate speech recognition is only the beginning. Enterprises need to know more than just who said what and when; they need rich insights about what was said and why. Deriving these insights in real time and at scale is critical to improving agent handling and optimizing the next steps in any given interaction.

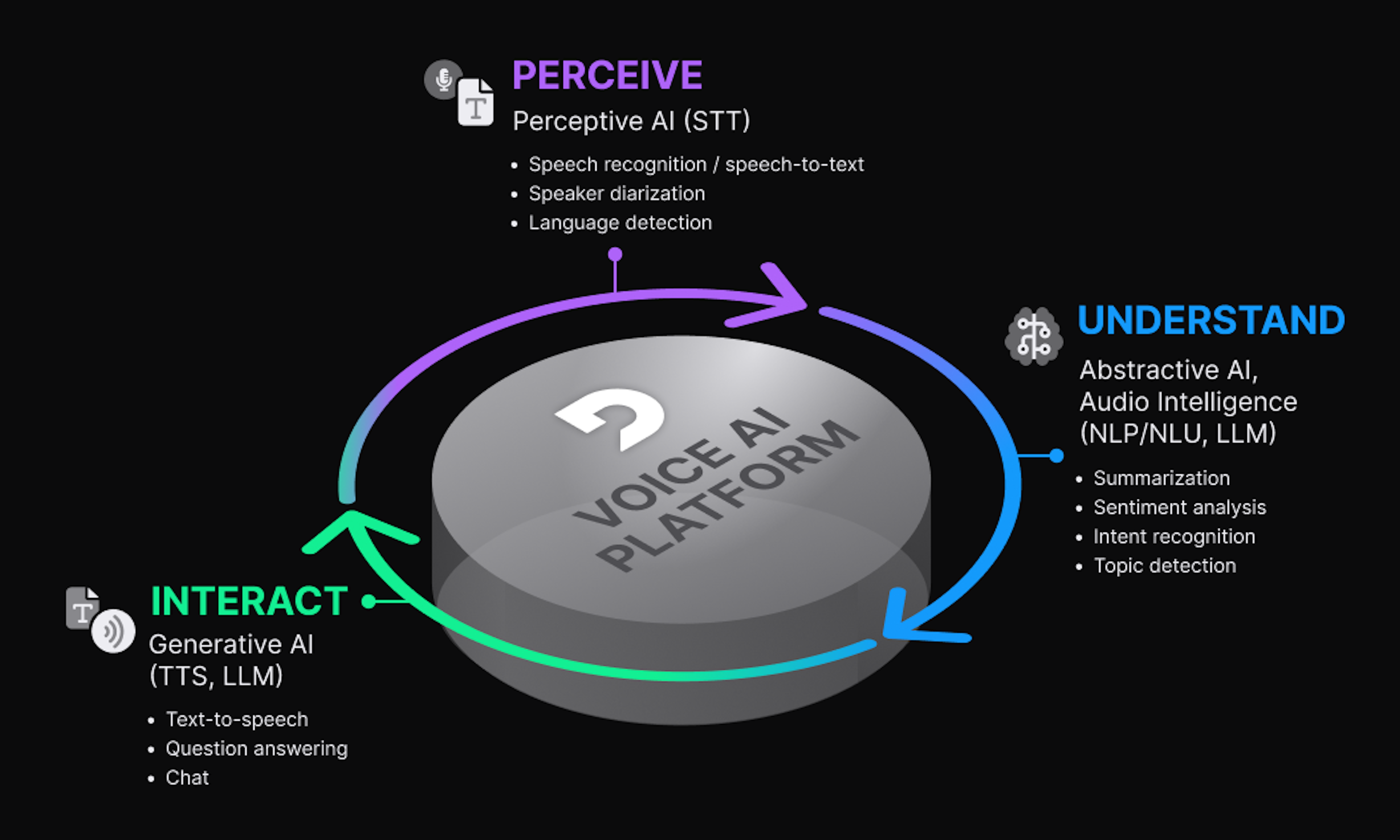

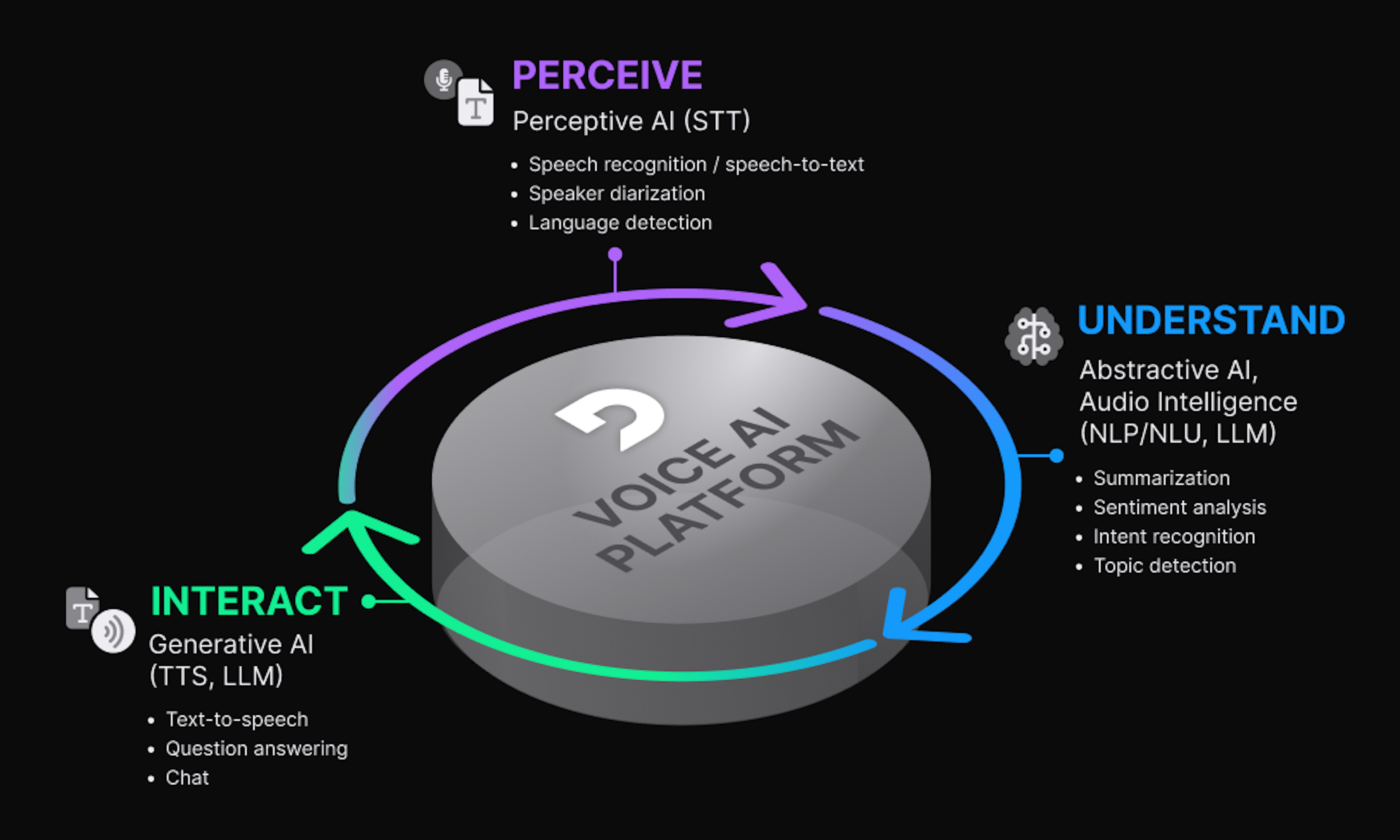

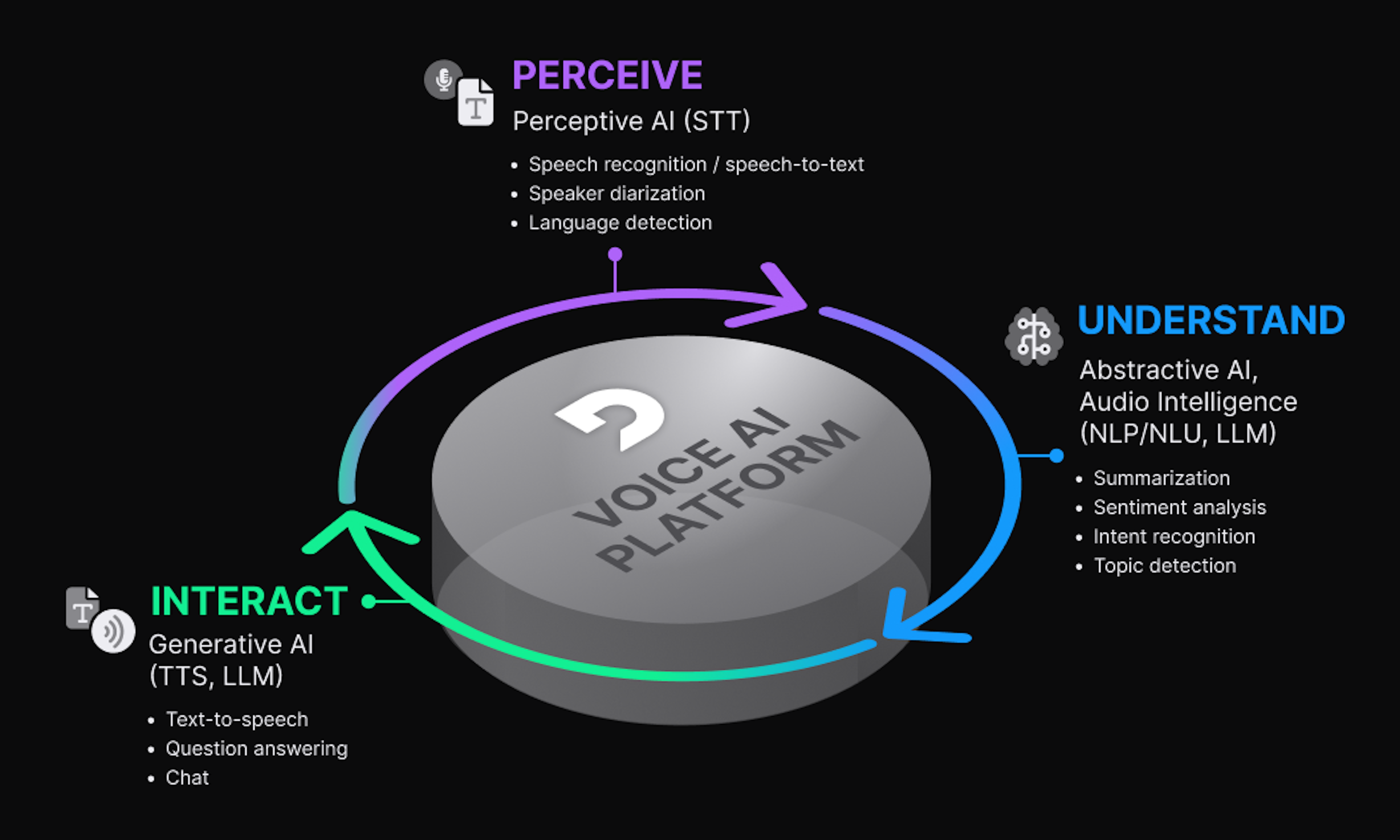

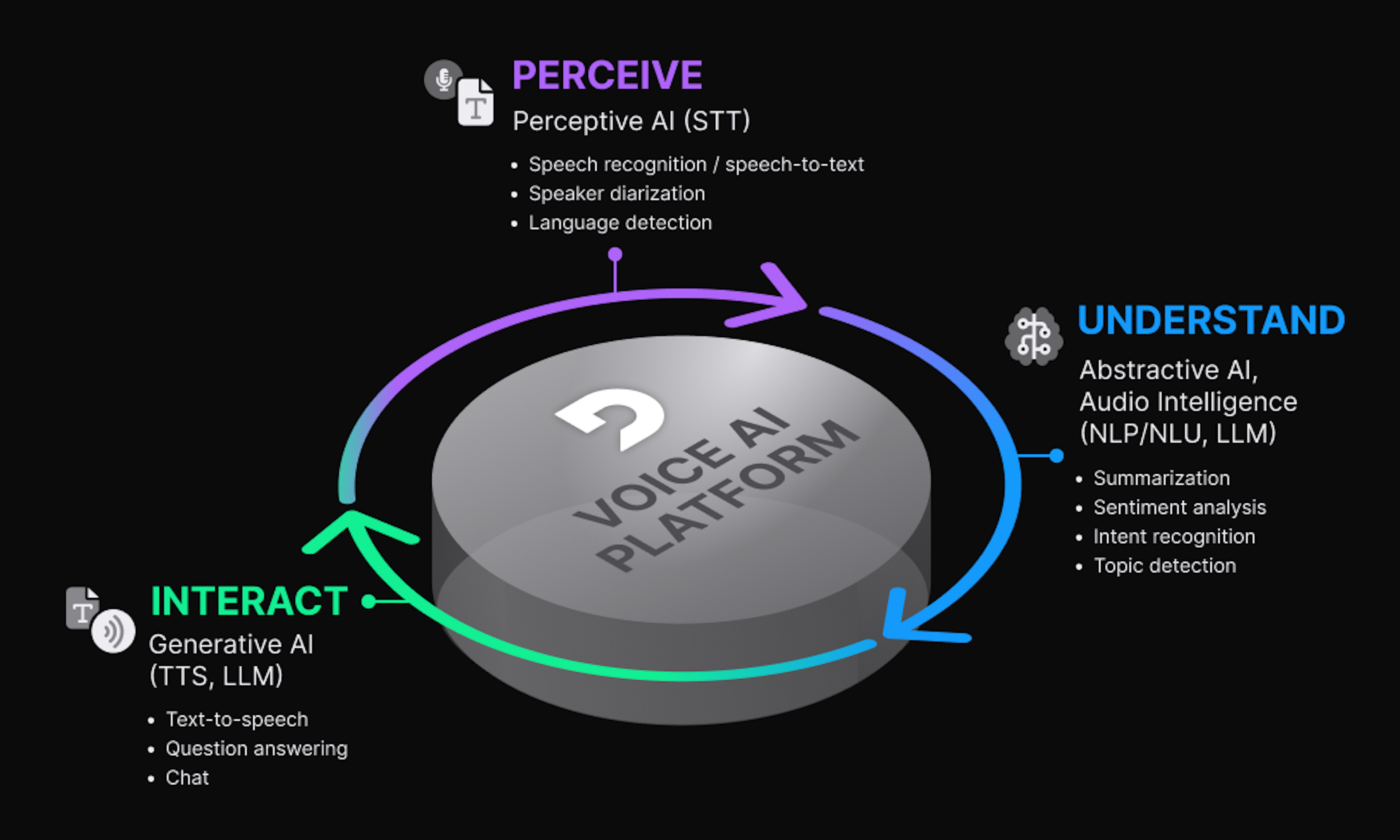

As a full-stack voice AI platform provider, Deepgram is committed to developing the essential building blocks our customers need to create powerful voice AI experiences across a range of use cases. Such a platform has three main components, which correspond to the primary phases of a conversational interaction:

Perceive: Using perceptive AI models like speech-to-text to accurately transcribe conversational audio into text.

Understand: Using abstractive AI models that perform natural and spoken language understanding tasks like summarization and sentiment analysis.

Interact: Using forms of generative AI models like text-to-speech and large language models (LLMs) to interact with human speakers just as they would with another person.

Deepgram has long been recognized in the market for its industry-leading transcription capabilities. But many of our customers have been asking for speech intelligence models that execute higher-level NLU tasks such as summarizing calls, analyzing speaker sentiment, and detecting topics and caller intent. They want a single vendor capable of fulfilling all their voice AI needs. With today’s release, we provide world-class conversational intelligence capabilities alongside our leading speech-to-text service, all from a single API.

Powering our intelligence APIs are task-specific language models (TSLMs), a form of small language model (SLM) technology that’s been specifically optimized for conversational interactions, having been trained on more than 60K high-quality conversations. This makes them contextually accurate, extremely responsive, and cost-efficient in support of real-time enterprise applications.

Deepgram Audio Intelligence

With this release, our customers can access our audio intelligence capabilities through the same API that provides the transcript: add the appropriate parameters to the query string when calling Deepgram’s /listen endpoint, and the API returns the corresponding intelligence results along with the transcript. Audio Intelligence models include:

Summarization - Accurately capture the essence of conversations including contextual information about the reason for calling, agent responses, and next steps to take.

Sentiment Analysis - Identify the sentiment (with a confidence score) of the entire conversation, as well as shifts in sentiment throughout the interaction, by scoring sentiment values for every word, sentence, utterance, and paragraph.

Intent Recognition - Recognize speaker intent throughout the entire transcript, providing a list of text segments with their corresponding intent labels and confidence scores.

Topic Detection - Identify key topics in the conversation, providing a list of text segments with the topics found in each segment and their confidence scores.
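As a rough sketch of what such a request might look like, the Python snippet below builds a /listen URL with intelligence features switched on in the query string. The base URL, the parameter names and values (`summarize`, `sentiment`, `intents`, `topics`), and the helper `build_listen_url` are assumptions made for illustration, not a statement of the actual API; check the API Documentation for the exact names before relying on them.

```python
from urllib.parse import urlencode

# Assumed base URL for the /listen endpoint; confirm against the API Documentation.
LISTEN_URL = "https://api.deepgram.com/v1/listen"

# Assumed mapping from feature name to (query parameter, value).
FEATURE_PARAMS = {
    "summarization": ("summarize", "v2"),
    "sentiment": ("sentiment", "true"),
    "intents": ("intents", "true"),
    "topics": ("topics", "true"),
}


def build_listen_url(features, base_url=LISTEN_URL):
    """Return a /listen URL with one query parameter per requested feature."""
    unknown = [f for f in features if f not in FEATURE_PARAMS]
    if unknown:
        raise ValueError(f"unknown features: {unknown}")
    params = [FEATURE_PARAMS[f] for f in features]
    # urlencode accepts a sequence of (name, value) pairs and keeps their order.
    return f"{base_url}?{urlencode(params)}" if params else base_url


url = build_listen_url(["sentiment", "intents", "topics"])
print(url)
```

The resulting URL would then typically be sent along with the audio and an API key; those details are left out here because they depend on how your application handles audio and credentials.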

Benefits and Use Cases

The contact center sector is swiftly embracing AI for call analytics to cut costs and enhance customer satisfaction. Gartner has found that comprehending customer expectations for their brand experience is critical to improving brand loyalty and satisfaction. With customers engaging through multiple channels, from voice calls to social media and chatbots, the volume of data generated is vast. Customers expect a cohesive experience, with personalized interactions that resume seamlessly across these channels. Quickly deciphering this data is crucial for superior customer service and business growth.

Our TSLM-based models are lightweight, fast, and efficient, and they’re available through the same API used for our industry-leading speech recognition service. Our models enable developers to quickly identify trends in customer sentiment, topics, and intents, improving user experiences. They support precise agent training, highlight customer issues, enhance operational efficiency, and provide personalized, context-aware interactions based on summaries of past conversations and real-time analysis of changing topics and sentiment.
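To make the idea of spotting trends concrete, here is a minimal Python sketch that counts topics across several calls and flags calls with a high share of negative segments. The per-call data layout and its field names (`segments`, `topic`, `confidence`, `sentiment`), the sample values, and the thresholds are all hypothetical and simplified for illustration; the real response format may differ.

```python
from collections import Counter

# Hypothetical, simplified per-call results. Field names and values are
# assumptions for illustration only, not the actual API response layout.
calls = [
    {"segments": [
        {"text": "I was charged twice this month.", "topic": "billing",
         "confidence": 0.92, "sentiment": "negative"},
        {"text": "Thanks, that fixed it.", "topic": "billing",
         "confidence": 0.81, "sentiment": "positive"},
    ]},
    {"segments": [
        {"text": "My router keeps dropping the connection.", "topic": "connectivity",
         "confidence": 0.88, "sentiment": "negative"},
        {"text": "I guess I need an upgrade, again.", "topic": "upgrade",
         "confidence": 0.40, "sentiment": "negative"},
    ]},
]


def topic_trends(calls, min_confidence=0.5):
    """Count topics across calls, skipping low-confidence segments."""
    counts = Counter()
    for call in calls:
        for seg in call["segments"]:
            if seg["confidence"] >= min_confidence:
                counts[seg["topic"]] += 1
    return counts


def negative_share(call):
    """Fraction of a call's segments labeled negative (0.0 for an empty call)."""
    segs = call["segments"]
    if not segs:
        return 0.0
    return sum(s["sentiment"] == "negative" for s in segs) / len(segs)


trends = topic_trends(calls)
# Flag calls where at least 60% of segments are negative (arbitrary threshold).
flagged = [i for i, call in enumerate(calls) if negative_share(call) >= 0.6]
print(trends.most_common())
print(flagged)
```

Filtering on the confidence score before counting keeps weak topic guesses from skewing the trend, while the negative-share threshold is one simple way to decide which calls deserve a closer look.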

Deepgram's Audio Intelligence allows contact centers and sales platforms to efficiently extract vital insights from vast numbers of conversations. This streamlines the identification of calls requiring further analysis and follow-up, conserves time and resources, and aids targeted agent coaching by pinpointing areas for improvement.

To learn more, visit our API Documentation or our product page to explore the details of our Audio Intelligence models. We are also hosting a product showcase webinar where Deepgram experts will dive deep into our most recent product features, including our new intelligence models. Register for free here.

If you have any feedback about this post, or anything else regarding Deepgram, we'd love to hear from you. Please let us know in our GitHub discussions or contact us to talk to one of our product experts for more information today.
